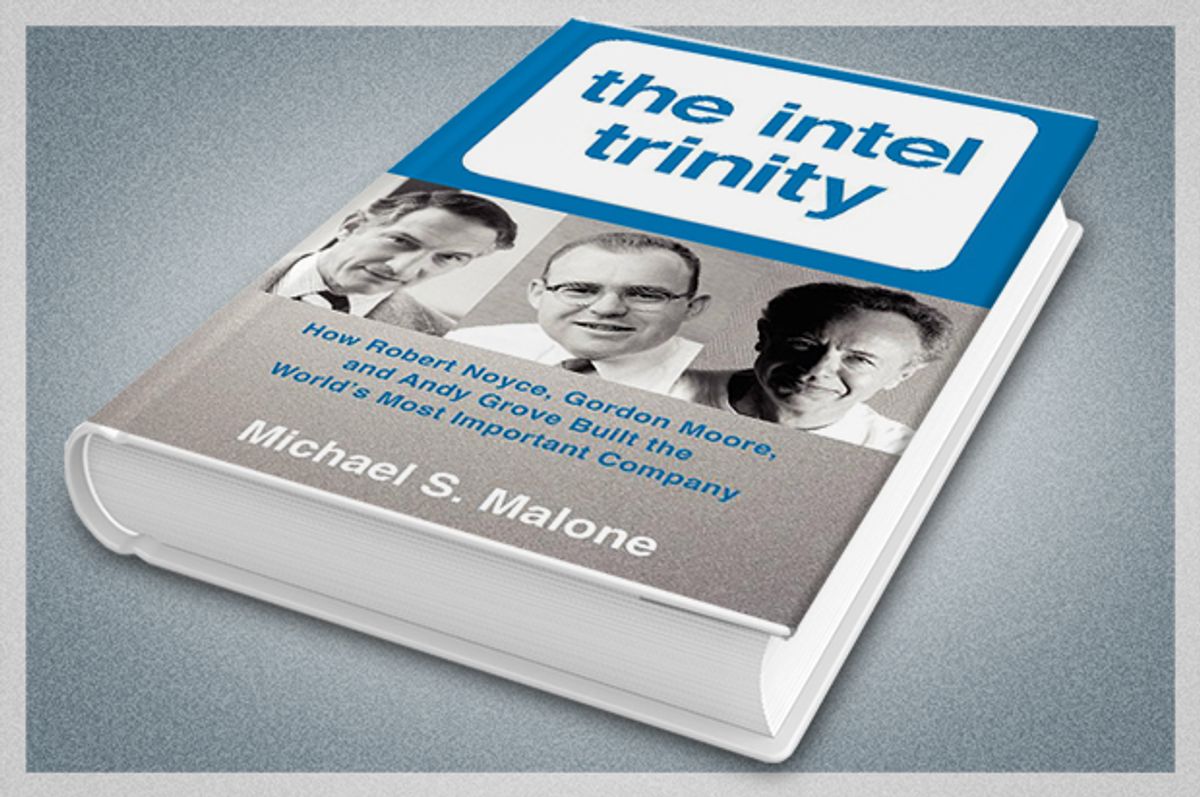

Here are some reasons why I didn't think I needed to read Michael Malone's "The Intel Trinity: How Robert Noyce, Gordon Moore, and Andy Grove Built the World's Most Important Company." I did not need to read, one more time, about the legendary departure of the "Traitorous Eight" from Nobel Prize winner William Shockley's pioneering Silicon Valley transistor firm -- the inspiration for generations of Silicon Valley engineers to jump ship and start up their own shop. I also didn't need to be told, once more, about how Gordon Moore came up with "Moore's Law" -- his famous prediction that computer chip performance would double every 18 months. And I certainly didn't need to reacquaint myself with how Andy Grove's childhood in Hungary during World War II and under the Soviet thumb contributed to the kind of hard-charging relentless paranoia necessary to "build the world's most important company."

Those are all good and fascinating stories, and nobody knows the history of the chip industry better than longtime tech journalist Michael Malone. So if you don't know them, then "The Intel Trinity" is a fine introduction to the founding myths and legends of Silicon Valley. But these tales have been told many times before. I decided to read "The Intel Trinity" because I was looking for something a little different. Love it or hate it, Silicon Valley, right now, has never enjoyed more political, cultural or economic influence. From whence did this power spring? The case can easily be made that Noyce, Moore and Grove were as responsible as anyone for how the Valley currently conducts itself. Their DNA lives on, in the venture capital companies that line Sand Hill Road, in the board rooms of Facebook and Google. So it seemed not just worthwhile to get a refresher course in how it all happened -- it also seemed crucially important. Silicon Valley's success today is fraught with complexities that few people, outside of science fiction writers, imagined when the first microprocessor chips started rolling out of fabrication plants. Myths and legends take on a different hue when their inheritors transform from canny geeks to masters of the universe. It's more pressing than ever to figure out how we got here, from there.

In a Valley swamped by self-importance, it would also be easy to accuse Michael Malone of his own over-reaching hubris when you realize that his title, "The Intel Trinity," is a direct play on the Father, the Son and the Holy Spirit. Really? Are we actually supposed to get down on our knees and worship these guys? But then again, there's no getting around it: the silicon chip has drastically changed our world. John Lennon overstepped when he boasted the Beatles were bigger than Jesus. But if Robert Noyce, the founder of both Fairchild Semiconductor and Intel, had said the same thing about the first microprocessor, he wouldn't have been wrong. Silicon Valley arrogance does not come from nowhere. It is embedded, like millions of miniaturized transistors, in the holy silicon wafer.

But this is not necessarily a permanent state of affairs. If there is one great lesson from "The Intel Trinity," it is that nothing about the Valley is set in stone. Even Moore's Law! As Malone stresses at numerous points, Moore's Law is not actually a law. There are no physical or economic realities that mandate that chips will keep getting smaller and cheaper. Moore's Law, Malone explains, was also a promise: "a unique cultural contract made between the semiconductor industry and the rest of the world to double chip performance every couple of years and thus usher in an era of continuous, rapid technological innovation and the life-changing products that innovation produced."

More than any other single company, Intel delivered on that promise. Intel catalyzed the modern era. The trinity of Noyce, Moore and Grove is responsible for the pervading psychological tenor of our times, in which change is the only constant.

That's a pretty big deal -- and well worth revisiting.

***

The opening paragraph of "Cramming More Components onto Integrated Circuits" by Gordon Moore, published on April 19, 1965, is one of the better technology forecasting documents ever printed.

"The future of integrated electronics is the future of electronics itself. The advantages of integration will bring about a proliferation of electronics, pushing this science into many new areas. Integrated circuits will lead to such wonders as home computers -- or at least terminals connected to a central computer -- automatic controls for automobiles, and personal portable communications equipment...."

42 years later, Apple launched the iPhone. For which Steve Jobs gets a lot of credit. And which, of course, would be about as useful as a brick without the deployment of the Internet and the invention of myriad cool software programs. But none of it happens without Moore's Law, without the relentless advance of the hardware innards that make it all go. There's no Facebook, no sharing economy, no YouTube, no Amazon and no Google without the chip. And of course, there's no Silicon Valley without the silicon, something that's awfully easy to forget as our attention is distracted by potato salad Kickstarter campaigns and public parking space auction apps and whatever Marc Andreessen is tweeting about.

Today, confidence in the fact that the phone that will be released by Samsung or Apple next month is guaranteed to be substantially more powerful than the one that came out last month (or there will be hell to pay!) is built into how we perceive the basic nature of our existence. Indeed, we get kind of frustrated when we don't see Moore's Law applying to things like, say, solar power or gas mileage or battery longevity. We have been conditioned, with respect to technology, to expect destabilizing change -- it's all that millennials have ever known.

By the beginning of the twenty-first century, historians had begun to appreciate that Moore's Law wasn't just a measurement of innovation in the semiconductor business or even the entire electronics industry, but it was also a kind of metronome of the modern world.

But the subtext of Malone's history of Intel is that it didn't necessarily have to happen this way. Intel built itself into one of the world's most dominant companies in part by sheer force of will, through the determination of its brilliant top executives to keep pouring money into research and development into the next line of chips through both economic boom and bust. Moore himself originally predicted his law would remain in force for no more than ten years -- the fact that forty years later it is still going strong is amazing, but it doesn't mean that forty years from now, the pace will not have faltered. The Technological Singularity is not a done deal. It won't happen by itself; it will happen because humans continue to exert their will.

Malone calls Moore's Law "Silicon Valley's greatest legacy ... a commitment to perpetual progress." He sees Intel as "ultimate protectors of the sacred flame," as a company that "never shirked its immense responsibility, and always took seriously its stewardship of Moore's Law, the essential dynamo of modern life."

But the book ends on something of a down note. The successors to Andy Grove, who retired in 1998, don't appear to have demonstrated the same sterling track record as their predecessors. For one thing -- and this is kind of a biggie -- Intel failed to stay on top of the shift to mobile. There's no Intel microprocessor inside your iPhone.

Industry-wide, the basic dynamics of Moore's Law still seem in place, but the changing of the guard appears to have precipitated a fundamental change in Intel itself. And that's a useful lesson to absorb for anyone interested in predicting how and whether Silicon Valley keeps marching relentlessly forward at the same pace as in decades past. The people who run these companies, who deploy these innovations and design these chips and program those apps -- those people make a difference. Bob Noyce, writes Michael Malone, cared more about ushering in the digital age than he did about money.

But what does Mark Zuckerberg want? What does Jeff Bezos want? The answers to those questions are not so clear.
