The rocket-quick rise of racial politics leveled off briefly in the 1970s, before shooting upward again. In good part because of racial appeals, the Republican Party had transformed the crushing defeat of Barry Goldwater into the overwhelming re-election of Richard Nixon. Then, in the 1976 presidential race, the defection toward the Republicans temporarily decelerated. Revulsion over corruption in the Nixon White House, revealed in the Watergate scandal, played a role. In addition, in an effort to distance himself from Nixon’s dirty tricks, the Republican candidate and former Nixon vice president, Gerald Ford, refused to exploit coded racial appeals in his campaign. Not that this marked the disappearance of race-baiting; instead, it merely shifted to Ford’s opponent, former Georgia governor Jimmy Carter. Carter was a racial moderate, and today he deservedly enjoys a reputation as a great humanitarian. Nevertheless, in the mid-1970s he knew that his political fortunes turned on his ability to attract Wallace voters in the South and the North as well. Campaigning in Indiana in April 1976, Carter forcefully opposed neighborhood integration:

I have nothing against a community that’s made up of people who are Polish or Czechoslovakian or French-Canadian, or who are blacks trying to maintain the ethnic purity of their neighborhoods. This is a natural inclination on the part of the people. I don’t think government ought to deliberately try to break down an ethnically oriented neighborhood by artificially injecting into it someone from another ethnic group just to create some form of integration.

Carter adopted an emerging technique in the 1970s, hiding references to whites behind talk of ethnic subpopulations, and he also presented blacks as trying to preserve their own segregated neighborhoods. Notwithstanding these dissimulations, few could fail to understand that Carter was defending white efforts to oppose racial integration, and many liberals criticized Carter for doing so. Nixon, who had been loudly berated by Democrats when he announced that neighborhood integration was not in the national interest, surely appreciated the spectacle. As Carter, too, came under attack, he apologized for using the term “ethnic purity,” but made a point of reiterating on national news that “the government shouldn’t actively try to force changes in neighborhoods with their own ethnic character.”

Carter won the presidency in 1976 with 48 percent of the white vote, sharply better than the Democratic presidential candidate four years earlier, who had drawn support from only 30 percent of white voters. But even with widespread revulsion at Nixon as well as Carter’s own Southern strategy, Carter did not manage to carry the white vote nationally. It was his 90 percent support among African Americans, many still furious at Nixon’s dog whistling, that put Carter over the top. In the mid-1970s, racial realignment in party affiliation had been temporarily slowed, not knocked down. Moreover, Carter’s racial pandering, together with Ford’s principled failure, seemed to cement the political logic of race-baiting. In the 1980 campaign, Ronald Reagan would come out firing on racial issues, and would blast past Carter. Just 36 percent of whites, only slightly better than one in three, voted for Carter in 1980.

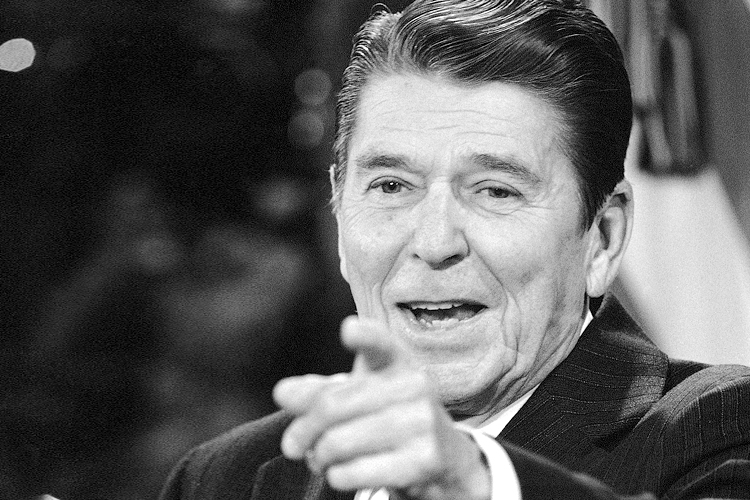

Ronald Reagan

Why did Ronald Reagan do so well among white voters? Certainly elements beyond race contributed, including the faltering economy, foreign events (especially in Iran), the nation’s mood, and the candidates’ temperaments. But one indisputable factor was the return of aggressive race-baiting. A year after Reagan’s victory, a key operative gave what was then an anonymous interview and, perhaps lulled by the anonymity, offered an unusually candid response to a question about Reagan, the Southern strategy, and the drive to attract the “Wallace voter”:

You start out in 1954 by saying, “N—, n—, n—.” [Editor’s note: The actual word used by Atwater has been replaced with “N—” for the purposes of this article.] By 1968 you can’t say “n—” — that hurts you. Backfires. So you say stuff like forced busing, states’ rights and all that stuff. You’re getting so abstract now, you’re talking about cutting taxes, and all these things you’re talking about are totally economic things and a byproduct of them is, blacks get hurt worse than whites. And subconsciously maybe that is part of it. I’m not saying that. But I’m saying that if it is getting that abstract, and that coded, that we are doing away with the racial problem one way or the other. You follow me—because obviously sitting around saying, “We want to cut taxes and we want to cut this,” is much more abstract than even the busing thing, and a hell of a lot more abstract than “N—, n—.” So anyway you look at it, race is coming on the back burner.

This analysis was provided by a young Lee Atwater. Its significance is twofold: First, it offers an unvarnished account of Reagan’s strategy. Second, it reveals the thinking of Atwater himself, someone whose career traced the rise of GOP dog whistle politics. A protégé of the pro-segregationist Strom Thurmond in South Carolina, the young Atwater held Richard Nixon as a personal hero, even describing Nixon’s Southern strategy as “a blueprint for everything I’ve done.” After assisting in Reagan’s initial victory, Atwater became the political director of Reagan’s 1984 campaign, the manager of George Bush’s 1988 presidential campaign, and eventually the chair of the Republican National Committee. In all of these capacities, he drew on the quick sketch of dog whistle politics he had offered in 1981: from “n—, n—, n—” to “states’ rights” and “forced busing,” and from there to “cutting taxes.” Linking all of these was “race . . . coming on the back burner.”

When Reagan picked up the dog whistle in 1980, the continuity in technique masked a crucial difference between him and his predecessors, Wallace and Nixon. Those two had used racial appeals to get elected, yet their racially reactionary language did not match reactionary political positions. Political moderates, both became racial demagogues when it became clear that this would help win elections. Reagan was different. Unlike Wallace and Nixon, Reagan was not a moderate, but an old-time Goldwater conservative in both the ideological and racial senses, with his own intuitive grasp of the power of racial provocation. For Reagan, conservatism and racial resentment were inextricably fused.

In the early 1960s, Reagan was still a minor actor in Hollywood, but he was becoming increasingly active in conservative politics. When Goldwater decided to run for president, Reagan emerged as a fierce partisan. Reagan’s advocacy included a stock speech, given many times over, that drummed up support for Goldwater with overwrought balderdash such as the following: “We are faced with the most evil enemy mankind has known in his long climb from the swamp to the stars. There can be no security anywhere in the free world if there is no fiscal and economic stability within the United States. Those who ask us to trade our freedom for the soup kitchen of the welfare state are architects of a policy of accommodation.” Reagan’s rightwing speechifying didn’t save Goldwater, but it did earn Reagan a glowing reputation among Republican groups in California, which led to his being recruited to run for governor of California in 1966. During that campaign, he wed his fringe politics to early dog whistle themes, for instance excoriating welfare, calling for law and order, and opposing government efforts to promote neighborhood integration. He also signaled blatant hostility toward civil rights, supporting a state ballot initiative to allow racial discrimination in the housing market, proclaiming: “If an individual wants to discriminate against Negroes or others in selling or renting his house, it is his right to do so.”

Reagan’s race-baiting continued when he moved to national politics. After securing the Republican nomination in 1980, Reagan launched his official campaign at a county fair just outside Philadelphia, Mississippi, the town still notorious in the national imagination for the Klan lynching of civil rights volunteers James Chaney, Andrew Goodman, and Michael Schwerner 16 years earlier. Reagan selected the location on the advice of a local official, who had written to the Republican National Committee assuring them that the Neshoba County Fair was an ideal place for winning “George Wallace inclined voters.” Neshoba did not disappoint. The candidate arrived to a raucous crowd of perhaps 10,000 whites chanting “We want Reagan! We want Reagan!”—and he returned their fevered embrace by assuring them, “I believe in states’ rights.” In 1984, Reagan came back, this time to endorse the neo-Confederate slogan “the South shall rise again.” As New York Times columnist Bob Herbert concludes, “Reagan may have been blessed with a Hollywood smile and an avuncular delivery, but he was elbow deep in the same old race-baiting Southern strategy of Goldwater and Nixon.”

Reagan also trumpeted his racial appeals in blasts against welfare cheats. On the stump, Reagan repeatedly invoked a story of a “Chicago welfare queen” with “eighty names, thirty addresses, [and] twelve Social Security cards [who] is collecting veteran’s benefits on four non-existing deceased husbands. She’s got Medicaid, getting food stamps, and she is collecting welfare under each of her names. Her tax-free cash income is over $150,000.” Often, Reagan placed his mythical welfare queen behind the wheel of a Cadillac, tooling around in flashy splendor. Beyond propagating the stereotypical image of a lazy, larcenous black woman ripping off society’s generosity without remorse, Reagan also implied another stereotype, this one about whites: they were the workers, the taxpayers, the persons playing by the rules and struggling to make ends meet while brazen minorities partied with their hard-earned tax dollars. More directly placing the white voter in the story, Reagan frequently elicited supportive outrage by criticizing the food stamp program as helping “some young fellow ahead of you to buy a T-bone steak” while “you were waiting in line to buy hamburger.” This was the toned-down version. When he first field-tested the message in the South, that “young fellow” was more particularly described as a “strapping young buck.” The epithet “buck” has long been used to conjure the threatening image of a physically powerful black man, often one who defies white authority and who lusts for white women. When Reagan used the term “strapping young buck,” his whistle shifted dangerously toward the fully audible range. “Some young fellow” was less overtly racist and so carried less risk of censure, and worked just as well to provoke a sense of white victimization.

Voters heard Reagan’s dog whistle. In 1980, “Reagan’s racially coded rhetoric and strategy proved extraordinarily effective, as 22 percent of all Democrats defected from the party to vote for Reagan.” Illustrating the power of race in the campaign, “the defection rate shot up to 34 percent among those Democrats who believed civil rights leaders were pushing too fast.” Among those who felt “the government should not make any special effort to help [blacks] because they should help themselves,” 71 percent voted for Reagan.

Goldwater’s revenge?

Today Reagan is a folk-hero of the right and center and is so widely popular that Barack Obama often feels obliged to invoke Reagan’s name reverentially. Why this obeisance to Reagan? At least partly it reflects a sense widely shared among liberals that the United States is historically and at heart a conservative country, requiring genuflection at the feet of conservative icons. For an example of this liberal belief in the country’s bedrock conservatism, consider an essay published several weeks before the 2012 presidential election, when the portents indicated an uncertain Democratic victory. Editorialist Frank Rich argued that whether Obama won or lost, conservatism would triumph in the end: “This is a nation that loathes government and always has. Liberals should not be deluded: The Goldwater revolution will ultimately triumph, regardless of what happens in November.” Is Rich right? Was Reagan a first step away from the exceptional politics of the New Deal era and back toward a more fundamentally conservative America? Are we at root a conservative country, moving inexorably in the direction of Goldwater’s repudiation of liberal governance?

The simplest way to answer this question may be to look at public attitudes toward government’s role in solving major social problems. In 2009, the political scientists Benjamin Page and Lawrence Jacobs exhaustively reviewed survey data on American attitudes toward activist government, compiling the results in a book entitled Class War? What Americans Really Think about Economic Inequality. Here are some of their findings:

- 87 percent of the public agrees that government should spend whatever is necessary to ensure that all children have really good public schools they can go to.

- 67 percent agree that the government in Washington ought to see to it that everyone who wants to work can find a job.

- 66 percent agree that the Social Security system should ensure a minimum standard of living to all contributors.

- 73 percent agree it is the responsibility of the federal government to make sure all Americans have health care coverage.

- 68 percent agree that government must see that no one is without food, clothing, or shelter.

- 78 percent favor their own tax dollars being used to help pay for food stamps and other assistance for the poor.

These hardly come across as the cold-hearted responses of a conservative polity wedded to Goldwaterite principles. Instead it is Lyndon Johnson’s vision, not Goldwater’s, that seems represented even today in the above opinions.

When it comes to the role of government in offering a helping hand and moderating capitalism, we are a fundamentally liberal country, one committed to the principle that we’re all in this together. Is it plausible that, from Johnson’s decisive triumph in 1964 to Nixon’s landslide re-election in 1972, the United States did a sudden about-face regarding liberal government? I suspect rather that Nixon’s win—and Reagan’s, and that of the other conservative dog whistlers—more resembles Goldwater’s peculiar victory in the Deep South. There, whites committed to the New Deal nevertheless allowed racial entreaties to bamboozle them into voting for an anti-New Deal candidate. Since 1972, we seem to have witnessed the Republicans proving out that when it comes to racial resentment, “the whole United States is Southern.” Like Goldwater, dog whistlers seem to win more on the strength of racism than conservatism. We should not confuse current antagonism toward government with an enduring rightwing national zeitgeist. Instead, we should have confidence in the liberal “we’re all in this together” ethos of the American people, even as we recognize the power of race to produce self-destructive voting patterns.

Here’s another version of the same conversation, this one focused on explaining conservative dominance in the United States by highlighting why some voters are deeply conservative, while others are committed liberals. Scholars have offered various explanations, yet consistently these theories focus on individual attributes. Suggestions include personalities attracted to social domination; psychological preferences for order; differences in core values; and differences in moral intuitions. I’m sympathetic to the insights offered as a way to understand individuals, even as I remain skeptical of the larger project of explaining conservatism in individualistic terms. If in 1964 almost two out of three whites voted for a politician who embodied New Deal liberalism, in 2012 almost the same proportion of whites supported a candidate hostile to activist government. Or again, whereas since 1964 in every election a majority of whites has voted for the GOP, only rarely have more than one in ten African Americans done so. Are whites fundamentally different people now than they were in the 1960s? Is there a different distribution of personality types, psychological preferences, values, and moral intuitions among whites and blacks? Rather, it seems likely the principal explanations for conservatism today must be located in history, culture, and context. Yes, there are intriguing differences between individuals that help explain why some embrace and others repudiate dog whistle politics. But more important to understanding this phenomenon is the 50-year trajectory of dog whistle racism in US society.

From the margins

The peripheral rather than core character of conservatism in American society is made clear by returning to the 1960s to track old-time hostility to liberal government. This is important not simply as a historical exercise, but to understand exactly how anti-New Deal politics eventually went from marginal to mainstream.

The John Birch Society

The John Birch Society illustrates the extremism that once marked anti-liberal politics. This rabidly anti-communist group promulgated outlandish conspiracy theories, claiming for instance that the federal mandate to put fluoride in drinking water was part of a nefarious plot to brainwash the country. With these sorts of ideas, the John Birch Society certainly seems like a worthy candidate for the dustbin of history, and so it would be, except that many of its views constitute today’s normal politics.

Massachusetts candy manufacturer Robert Welch, powerful in business circles as a former director of the National Association of Manufacturers, founded the John Birch Society in 1958 to combat “communism” in American life. In a context in which actual domestic support for communism was virtually nil, “communism” in rightwing discourse primarily functioned as a hyperbolic catchall for everything that was supposedly wrong with a political establishment that had embraced the New Deal.

Unsurprisingly, the 1964 election of Lyndon Johnson and his push to enact Great Society programs unhinged Welch and his JBS cohort, causing consternation bordering on apoplexy. In 1966, Welch published an essay entitled “The Truth in Time” to lay bare once and for all the depths of the conniving plot against a slumbering United States. Weaving together historical fact, paranoid fiction, and end-times phraseology, Welch began his essay with “the Illuminati,” a secret society of would-be world rulers who supposedly fomented the French Revolution and eventually “coalesced into the Communist conspiracy as we know it today.” Arriving at the present nearly out of breath, both from the exertion of fabricating history and from the near-hysteria induced by his tale, Welch warned that “the one great job left for the Communists is the subjugation of the people of the United States.” Welch then cataloged their dastardly “methods,” and these capture the central themes of reactionary politics that have since emerged:

the constant indoctrination of young and old alike, through our educational system, and through our communications and entertainment media, in a preference for “welfare” and “security” against responsibility and opportunity; making an ever larger and larger percentage of American industry, commerce, agriculture, education, and individuals accustomed to receiving, and dependent on, government checks; a constant increase in legislation, taxation, and bureaucracy, leading directly towards one hundred percent government; . . . the creation of riots and the semblance of revolution under the guise and excuse of promoting “civil rights”; . . . [and] destroying the power of local police forces to preserve law and order.

The whole thing might be laughable, though it also provides a good description of the hobgoblins conjured by the right today. First, there’s the supposed moral breakdown caused by the siren call of welfare and government-guaranteed economic security. No doubt Welch would have applauded Mitt Romney’s dismissal of 47 percent of the country as “dependent upon government, who believe that they are victims, who believe the government has a responsibility to care for them [and who will never assume] personal responsibility and care for their lives.” Next, Welch shuddered at the prospect of a government-run economy, a specter repeatedly raised in the contemporary opposition to health care reform. Also, Welch bemoaned the collapse of “law and order,” thus anticipating decades of racial pandering conducted in promises to get tough on crime. Finally, Welch saw a special menace from nonwhites, evidenced in his fear of riots and revolution under the excuse of promoting civil rights. Welch fiercely opposed integration, and his racial fears were widely shared in the Birch Society. Today, of course, racial panic continues to rip through the right.

Heralding the “everything old is new again” politics of the right fringe, in 2007 the rightwing media personality Glenn Beck interviewed a JBS spokesperson, interjecting in the midst of the conversation: “when I was growing up, the John Birch Society—I thought they were a bunch of nuts. But now . . . you guys are starting to make more and more sense to me.” Maybe this comment says more about the older Beck, who today is not especially known for his sanity. But it also reflects a core truth: in the 1960s, Birch Society discourse struck almost everyone, even the young Beck, as crazy talk. More than extreme, Welch’s half-baked intellectualism made conservative ideas risible, fodder for a good guffaw but not the sort of stuff that anyone beyond the fringes of American politics would believe.

Understanding this, in 1965 conservative movement builder William F. Buckley tried to clear space for a more serious conservatism by denouncing Welch’s views as “far removed from reality and common sense.” Buckley recognized the larger problem. In the battle of ideas about how best to organize society, the right had not only lost, it had no tenable arguments whatsoever. The intellectual and political class as a whole broadly agreed on the need to foster a system in which the government ensured that free enterprise served the overarching interests of democracy. While Republicans and Democrats disagreed on how best to structure market rules and social welfare provisions, there was nevertheless wide consensus regarding the probity of regulated capitalism. The John Birch Society, or the repudiated politics of candidates such as Barry Goldwater, simply provided no credible counterweight to this consensus. How would conservatives develop it?

The Powell Memorandum and the rise of conservative think tanks

As the 1960s came to a close, the right increasingly recognized the lack of credibility around conservatism. In the summer of 1971, the Chamber of Commerce asked Lewis Powell, former head of the American Bar Association and a prominent corporate lawyer from Virginia, to diagnose the anemic character of conservatism. Powell is better known today as a Supreme Court justice, for later that year Nixon appointed him to the Court, partly using the elevation of this Southern lawyer to signal the administration’s opposition to civil rights. Of more immediate concern here, though, is the memorandum Powell prepared for the Chamber of Commerce outlining what he perceived as the challenges to the “free enterprise system,” and how it might be saved.

Powell’s memorandum condemned assaults on business by the predictable rabble: “Communists, New Leftists, and other revolutionaries who would destroy the entire system, both political and economic.” More worrisome for Powell, though, was his sense that these attacks were supported by “perfectly respectable elements of society: from the college campus, the pulpit, the media, the intellectual and literary journals, the arts and sciences, and from politicians.” Powell also sharply criticized American business for its “apathy and default,” and was bewildered by the “extent to which the enterprise system tolerates, if not participates in, its own destruction.” Powell thought he saw a pusillanimous mentality among corporate leaders. “The painfully sad truth is that business, including the boards of directors and the top executives of corporations great and small and business organizations at all levels, often have responded—if at all—by appeasement, ineptitude and ignoring the problem.”

Rallying his team with a last-down pep talk, Powell proposed vigorous and concerted corporate mobilization to fund and support an army of national organizations capable of generating conservative ideas and also of inserting them into the national conversation. Powell had in mind existing institutions, though he also urged the creation of new front groups. These organizations should make special efforts, Powell advised, in penetrating the major idea-generating sectors of American society: higher education, especially the social sciences; the media, especially television; and the court system. As to their methods, Powell advised creating stables of “scholars” who could generate material supporting free enterprise, and assembling corps of “speakers of the highest competency,” ever ready to take to the airwaves. In the battle over the future of America, Powell advised corporations to manufacture their own beholden intelligentsia.

Powell’s memorandum almost immediately came to fruition. “Strident, melodramatic, and alarmist,” the historian Kim Phillips-Fein reports, the memorandum “struck a nerve in the tense political world of the early 1970s, giving voice to sentiments that, no matter how extreme they might have seemed, were coming to sound like commonsense in the business world during those anxious years. Not all businessmen shared Powell’s passions. But those who did began to act as a vanguard, organizing the giants of American industry.” The Powell memorandum inspired a flush of donations to already-established institutions, like the Chamber of Commerce and the American Enterprise Institute, and also encouraged the creation and funding of a raft of conservative think tanks, most notably the Heritage Foundation, the Manhattan Institute, and the Cato Institute. As one example, according to a study of the radical right’s origins, one strong Birch Society backer, Joseph Coors, the president of Coors Brewing Company, “poured millions of dollars into dozens of evangelical and New Right organizations and established a pattern for corporate funders: the Scaife, Smith Richardson, Olin, and Noble foundations; the Kraft, Nabisco, and Amway corporations, to name just a few.” After the early 1970s, money that had once funded fringe conspiracy theories now flowed into more respectable “think tanks.”

At the time, existing think tanks offered non-partisan venues for research and policy debates, and their general reputation was positive. Looking for ways to produce and package conservative ideas, the think tank form and name served reactionary forces well: they would concoct their own research and stage their own debates under the umbrella of “think tanks,” with their reassuring connotation of thoughtful neutrality. But rather than fostering wide-ranging inquiry, these new institutions were designed to pump out consistent messages supporting the priorities of their financial backers. In the world of conservative think tanks, apostasy became a firing offense for individuals, and also sufficient cause to defund organizations. In one recent example, the prominent conservative David Frum saw his salary vanish at the American Enterprise Institute after he criticized Congressional Republicans for vilifying Obama’s health care bill. As one critic quipped about these conservative think tanks, “they don’t think; they justify.”

Achieving mainstream legitimacy

Rightwing think tanks found a perfect ally in Ronald Reagan, who combined an eminently likeable demeanor with a pitiless view of the poor and an ideologue’s fervor for repealing the New Deal. In 1980, ten days after Reagan won the presidency, the Heritage Foundation issued a 3,000-page, 20-volume report entitled Mandate for Leadership, specially written to serve as “a blueprint for the construction of a conservative government.” The new president distributed a version to every member of his cabinet, and by Heritage’s estimate implemented two-thirds of its recommendations in the first year of his administration. Reagan also spoke glowingly of AEI, arguing that “today, the most important American scholarship comes out of our think tanks—and none has been more influential than the American Enterprise Institute.” Reagan at once wrapped himself in the legitimacy of the new think tanks and simultaneously bolstered that very legitimacy, helping them launder propaganda into “the most important American scholarship.” He did this not only by extolling their work, but also by adopting their agenda as his own.

Following Mandate for Leadership’s main goal for the Reagan presidency, the administration moved aggressively to reduce taxes for the rich. Reagan slashed rates for corporations and individuals in the highest income brackets, with the cuts enacted in 1981 alone showering $164 billion on the corporate sector, at that point the most generous business tax reduction in the history of the nation. Over the course of his presidency, Reagan lowered the top marginal tax rate on individuals from 70 percent to 28 percent. As Hedrick Smith notes in Who Stole the American Dream?, “The windfall from his tax cuts for America’s wealthiest 1 percent was massive—roughly $1 trillion in the 1980s and another $1 trillion each decade after that. The Forbes 400 Richest Americans, enriched by the Reagan tax cuts, tripled their net worth from 1978 to 1990.” Under Reagan’s tax policies, the process of transferring wealth from the poor and the middle class to the rich and especially to the super-rich began with a vengeance.

What convinced voters to rally behind Reagan’s tax giveaways to the rich? More than anything else, it was Heritage’s second principal goal that helped Reagan sell his tax cuts: gutting welfare. Limiting welfare had long been part of the plutocratic agenda, as cutting government spending on social services promised to reduce tax demands on the wealthy. Aided by dog whistle politics, however, curtailing welfare emerged as more than a goal; it also became a means of mobilizing a broad hostility toward government itself. This hostility in turn helped sell tax cuts: even if the cuts did not directly benefit the middle class, they nevertheless provided a means to lash out against the reviled liberal state.

Liberty, welfare and integration

We can explain shifting perceptions of welfare in the twentieth century through three conceptions of liberty. The first is “liberty from government.” This libertarian version stresses freedom from state coercion, and, more generally, negative freedom from external constraints. During the robber baron era of the late nineteenth and early twentieth centuries, the so-called “malefactors of great wealth” easily manipulated this conception of liberty to support their own agenda. These plutocrats, many having made their fortunes through government contracts and state-backed monopolies, cynically celebrated “rugged individualism” for the little guy, preaching that the freest man was the one solely responsible for himself. These sorts of arguments were mobilized to oppose unions, workplace safety rules, minimum wage laws, and financial support for the unemployed, the injured or disabled, and the elderly. Despite the rhetoric, however, there was little liberty in penury. During the Great Depression it became brutally apparent that genuine freedom depended on security in the face of market vicissitudes. The “rugged individual” shriveled up and blew away in the fierce winds of the Dust Bowl.

The negative conception of “liberty from” was thereafter supplemented by a positive version of “liberty through government.” Under this New Deal version, government gave individuals the realistic power to make their own choices by tempering market abuses and liberating citizens from the dire constraints of need. In his last Sunday speech before his assassination, Martin Luther King, Jr., told the audience: “We hold these truths to be self-evident . . . that all men are endowed with the inalienable rights of life, liberty, and the pursuit of happiness.” Then he continued: “But if a man does not have a job or income, he has neither life or liberty nor the possibility of the pursuit of happiness. He merely exists.” Positive liberty sees freedom not in the abstract, but in the concrete options realistically open to citizens. Thus, rather than seeing government as an enemy of liberty, New Deal liberalism came to see government as key to promoting liberty. The modern liberalism that arose with the New Deal still restricts government infringements on liberty in some areas, such as speech. But more fundamentally it promotes positive liberties by empowering government in other areas, for example in regulating the market and providing help to the needy.

A broad consensus arose around liberty through government; it suffered during the 1960s, however, as hostility to civil rights and integration increased. A new conception of liberty began to emerge: “freedom to exclude.” Both earlier concepts of liberty had underlying racial subtexts, being largely restricted to whites. But freedom to exclude had an explicit racial message: it meant the liberty to exclude nonwhites from white neighborhoods, workplaces, and schools.

When Lyndon Johnson declared his War on Poverty, he extended the benefits of social welfare to nonwhites. In the process, this effort targeted segregation, for obviously poverty in nonwhite communities was deeply tied to racially closed workplaces, schools, and housing. As a result, welfare and integration became tightly linked, and hostility toward integration morphed into opposition to welfare. “The positive liberties [that the War on Poverty] extended to African Americans,” notes Jill Quadagno, a scholar of race and welfare, “were viewed by the working class as infringement on their negative liberties, the liberty for trade unions to discriminate in the selection of apprentices and to control job training programs; the liberty to exclude minorities from representation in local politics; the liberty to maintain segregated neighborhoods.” To talk of rank racial discrimination in unions, politics, and housing in the language of a perceived infringement on liberty may seem strange. Yet for many, this is how they experienced integration. It was a social experiment being forced on them by government, and therefore a governmental infringement on their liberty to exclude.

Reagan’s campaign against welfare helped make the case for tax cuts by successfully using social programs like welfare, and its implicit connection to integration, to convince voters that the real danger in their lives came from a looming, intrusive government. This idea that government was the primordial threat would have seemed silly in the decades immediately following the bitter experience of the Great Depression. But decades removed from that hardship, and after many whites had risen into the comfortable ranks of the middle class, government impingements on personal liberty came to seem the greater threat to the well-being of many. Like the earlier concept of liberty from government, freedom to exclude presented government as the problem, and thus provided grounds for opposing liberalism and its vision of liberty through government. The rugged individual, hostile to government regulation of the market, died in the Great Depression; but after the civil rights movement, he rose from the grave as the “traditional individual,” resentful of government efforts to force unwanted racial integration. Both figures, convinced that government rather than concentrated wealth posed the greatest threat to their vaunted liberty, proved willing to support the robber barons of their day.

Ironically, the very structure of New Deal aid facilitated the demonization of the activist state. Responding to the individualistic strain in American culture, New Dealers and their heirs purposefully sought to hide from many beneficiaries how government helped them. From the outset, for instance, Social Security’s architects told recipients that these were “earned” benefits, rather than the stigmatized “welfare” given to the penurious. Similarly, many other wealth transfer programs have been structured as tax breaks, again cloaking the role of the activist state. In the historian Molly Michelmore’s evocative terms, the liberal reform agenda “enabled and encouraged the majority of citizens to define themselves as taxpayers with legitimate claims on the state not shared by tax eaters on the welfare rolls.” Liberals obfuscated the assistance provided by government—a calculated decision aimed at reducing opposition from a public steeped in norms of individual responsibility, though also communitarian values. The dissimulating design of the liberal state, perversely, eased the task of conservatives keen on stoking hostility toward liberal governance. Even if apocryphal, the oxymoronic Tea Party cry “Keep Government out of my Medicare!” epitomizes how anti-government sentiment can be mobilized more easily when the public fails to discern government’s helping hand.

During the Reagan era, for the first time since the onset of the Great Depression, significant cultural space opened up to present government, rather than concentrated wealth, as the greatest threat to freedom faced by the middle class. In turn, massive tax cuts were sold as the appropriate way to restrain a looming, intrusive state. On one level, of course, the tax revolt of the 1980s was more precisely targeted toward preventing the transfer of resources to “them,” the “undeserving poor,” who were disproportionately people of color. More than this, though, opposition to taxes came to mean opposition to government meddling. The point is not that Reagan or other Republican administrations have reduced the size of government (on the contrary, they have repeatedly and vastly expanded federal power and dramatically increased the national debt, not least through unsustainable tax giveaways to the rich). The point, rather, is that they sold tax cuts for the rich, and indeed the whole agenda of reduced regulation and slashed services, as an expression of hostility toward liberal government. The anti-tax insurgent Grover Norquist has been widely quoted as saying: “I’m not in favor of abolishing the government. I just want to shrink it down to the size where we can drown it in the bathtub.” But what makes many voters sympathetic to the idea of extinguishing government in the first place? For many, this seething hostility toward government is rooted in racial narratives of freedom in jeopardy.

Affirmative action

At the urging of the Heritage Foundation, the Reagan administration also used, and indeed created, affirmative action as a wedge issue. Affirmative action emerged in the late 1960s out of efforts to directly foster integration in schools and workplaces, and while often the object of resentment, until the 1980s such programs nevertheless enjoyed broad support from a polity generally committed to fulfilling the civil rights goal of breaking down segregation. Reagan set out not only to roll back but to politicize affirmative action, and to spearhead this effort he appointed William Bradford Reynolds to head the Civil Rights Division of the Justice Department. Reynolds, an Andover- and Yale-educated corporate lawyer, had no background in civil rights; instead, he was a fierce critic of affirmative action, which he saw as racial discrimination against innocent whites. Under Reynolds, the Justice Department began highly public campaigns to oppose affirmative action, presenting numerous arguments to the Supreme Court that race-conscious remedies amounted to impermissible racism against whites. It also sought to intervene in school desegregation cases, encouraging local school districts to contest court-ordered integration plans. The administration defended segregated school districts so aggressively that it caused Drew Days, who had headed the Department of Justice’s civil rights efforts under Carter, to despair, “What they seek is no less than a relitigation of Brown v. Board of Education.”

Like Reagan’s campaign against welfare, his broadsides against affirmative action constituted a form of dog whistle politics. The ostensible issue wasn’t minorities at all, but the supposedly simple principle of not discriminating for or against any individual. In 1984, when Reagan won re-election in a landslide, the GOP platform had a new plank on affirmative action: “We will resist efforts to replace equal rights with discriminatory quota systems and preferential treatment. Quotas are the most insidious form of discrimination: reverse discrimination against the innocent.” The document said nothing about race directly, but obviously “the innocent” meant innocent whites. Attacking affirmative action provided a way for the GOP to constantly force race—and the party’s defense of white interests—into the national conversation.

Beyond generally pushing the idea of whites as victims, attacking affirmative action had a more particular payoff in how this issue intersected with class. The constant harping on welfare directed attention to nonwhites defined overwhelmingly as poor and dysfunctional. This pernicious imagery was challenged, though, by the growing number of nonwhites attending top schools, holding good jobs, and living in nice neighborhoods. Attacking affirmative action provided a way to paint even successful minorities as still representing a threat to whites, by portraying these minorities as “thriving in jobs that they had obtained, not through hard work or merit, but through affirmative action—jobs that under any fair system of competition would have rightfully gone to whites.” Closely related to this, the charge that liberalism gave elite minorities an unfair advantage created a racial spook with which to directly rattle those whites whose wealth typically shielded them from contact with the poor of any color. Railing against affirmative action provided a way to tell well-off whites that even they were at risk from the liberal obsession with integration: their jobs, and also their children’s access to top colleges, were under assault from do-gooder liberals.

In 1984, Reagan easily won re-election, capturing the white vote by a factor of almost two to one. Blacks heard the dog whistle too. Over 90 percent voted against Reagan—not that it mattered to the Republicans, for as Kevin Phillips had noted, with the support of enough whites the Republicans could win with virtually no African American support.

Excerpted from “Dog Whistle Politics: How Coded Racial Appeals Have Reinvented Racism and Wrecked the Middle Class,” by Ian Haney López. Copyright © 2014 by Ian Haney López. Reprinted by arrangement with Oxford University Press, a division of Oxford University. All rights reserved.