As technologists frequently remind us, the singularity, a time when the realization of smarter-than-human computers irrevocably alters our future, is nearer every day. Futurists take this prospect very seriously. They gather to discuss what it means at the annual Singularity Summit, a meeting hosted by the Singularity University, dedicated to exploring the “disruptive implications and opportunities” of the evolution of artificial technology. But, crucially, most of those doing the exploring are men.

We don’t have to wait for Data-like robots to think about how discriminatory norms manifest themselves through technology. Google Instant’s predictive search capability, which saves users 2-5 seconds by making the most likely suggestions “based on popular queries typed by other users,” is a good illustration. Earlier this year, a study conducted by Lancaster University concluded that Google Instant’s autocomplete function creates an echo chamber for negative stereotypes regarding race, ethnicity and gender. When you type the words “Are women …” into Google, it predicts you want one of the following: “… a minority,” “… evil,” “… allowed in combat,” or, last but not least, “… attracted to money.” A similar anecdotal exercise by BuzzFeed’s Alanna Okun concluded that anyone curious about women would end up with the impression that they are “crazy, money-grubbing, submissive, unfunny, beautiful, ugly, smart, stupid, and physically ill-equipped to do most things. And please, whatever you do, don’t offer them equality.” In effect, algorithms learn negative stereotypes and then teach them to people who consume and use the information uncritically.

As with Search, Google’s predictive targeted advertising algorithms use aggregated user results to make what appear to be sexist assumptions based on gender, for example, inferring from a woman’s searches and interests that she was a man because she was interested in technology and computers. Likewise, Facebook’s advertising processes can yield strangely prudish results. Earlier this year, Facebook declined to run a women’s healthcare ad featuring a woman holding her breast for a self-examination (with not even a nipple visible). Last year, Apple changed the name of Naomi Wolf’s book “Vagina” to “V****a” in iTunes. I have yet to find any P***ses.

Results like these have real effects on our perception of women. In the case of the Facebook ad it is possible that even the presence of a woman’s breast (even without a visible areola) raised a red flag for obscenity in violation of the company’s community guidelines. But Facebook bans images of women’s nipples on the site – regardless of whether they appear in art, political protest or breast-feeding. The effective result, even if it’s not the intent, is the conflation of all female nudity with pornography and the denial of women’s agency in defining for themselves how their bodies should be used, perceived and represented. This is a conservative strike against female freedom of speech, as an expression of agency.

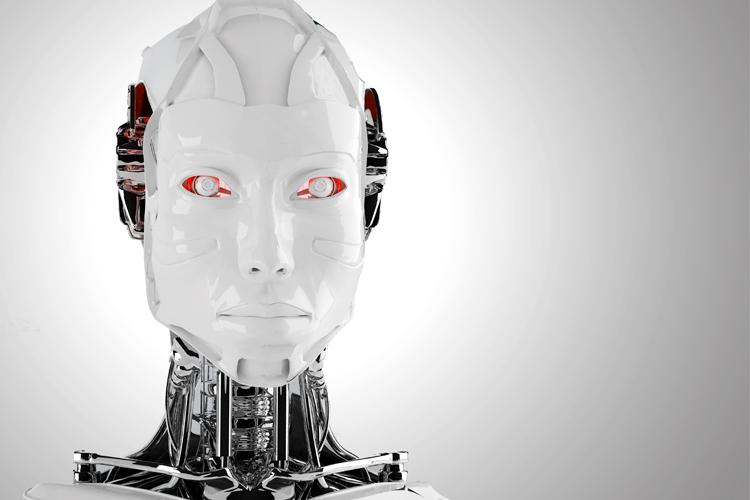

Agency is an important concept in considerations of humanity, one that, in terms of artificial intelligence, turns out to be highly gendered as well. Elegantly anthropomorphized robots are on the horizon. Ask almost any scientist involved in artificial intelligence and they will tell you that in order to make robots socially acceptable they need to be humanlike. Which means, most likely, they will have gender. Last year, scientists at Bielefeld University published a study in the Journal of Applied Social Psychology that might have profound implications. They found that human users thought of “male” robots as having agency, that is, being able to exercise control over their environments. “Female” robots, on the other hand, were perceived as having communal personality traits, being more focused on others than on themselves. Believing that male robots have agency could turn into a belief that, when we employ them, they should have agency and autonomy. “Females,” not so much. In essence, male robots are Misters, female robots are Mrs. and Misses.

The Bielefeld University researchers also found that people relied significantly on hair length to assign a gender to a robot. The longer the robot’s hair was, the more likely people were to think of it as female. And once gender was effectively assigned by the participants in the study, it colored their choices of what the robot should do. “Male” robots were considered better choices for technical jobs, like repairing devices, and “female” robots were thought to be “better” at stereotypical household chores.

What do gendered stereotypes in robots look like? Robotic natural language capabilities and voices substantively affect human interactions. Consider Siri today. The latest iPhone release will give users in the U.S. the option of choosing a male or female voice. Apple provided no reason why prior versions of Siri were female in the U.S. but male in the U.K. Having voice options may sound like a step toward gender equality, allowing people to think of assistants as either male or female. However, there may have been another reason for making this product choice: while people are more likely to like female voices, they actually trust male voices more and are more likely to think of them as intelligent and authoritative.

Siri’s purported sexism was not only a matter of voice selection, but of content. It seemed as though sexist biases were embedded in the functionality. Answers were markedly skewed in favor of meeting the needs of straight, male users. Siri couldn’t answer basic questions about female-centered oral sex, contraception and health.

At the 2008 Singularity Summit, Marshall Brain, author of “Robotic Nation,” described the predictable, potentially devastating effects of “second intelligence” competition in the marketplace. The service industry will be the first affected. Brain describes a future McDonald’s staffed by attractive female robots who know everything about him and can meet his every fast food need. In his assessment, an attractive, compliant, “I’ll get you everything you want before you even think about it” female automaton is “going to be a good thing.” However, he went on to talk about job losses in many sectors, especially the lowest paying, with emphasis on service, construction and transportation sectors. Brain noted that robotic competition wouldn’t be good for “construction workers, truck drivers and Joe the plumber.” Nine out of 10 women are employed in service industries. The idea that women will be disproportionately displaced as a result of long-standing sex segregation in the workforce did not factor into his analysis.

I don’t mean to pick on Brain, but it is clear that male human experiences, expectations and concerns are normative in the tech industry and at the Singularity Summit. The tech industry is not known for its profound understanding of gender or for producing products optimized to meet the needs of women (whom the patriarchy has cast as “second intelligence” humans). Rather, the industry is an example of a de facto sex-segregated environment, in which, according to sociologist Philip Cohen, “men’s simple assumption that women don’t really exist as people” is reinforced and replicated. Artificial intelligence is being developed by people who benefit from socially dominant norms and have little vested personal incentive to challenge them.

The Bielefeld researchers concluded that robots could be positively constructed as “counter-stereotypical machines” that could usefully erode rigid ideas of “male” and “female” work. However, the male-dominated tech sector may have little interest in countering prevailing ideas about gender, work, intelligence and autonomy. Robotic anthropomorphism is highly likely to result in robotic androcentrism.

Singularity University, whose mission is to challenge experts “to use transformative, exponential technologies to address humanity’s greatest challenges,” has 22 core faculty on staff, three of whom are women. The rest, with the exception of maybe one, appear to be white men. This ratio does not suggest an appreciation of the fact that one of humanity’s greatest challenges right now is misogyny.