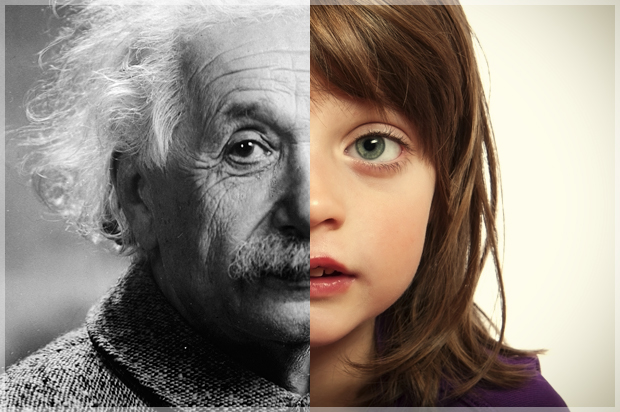

What happens to our child’s brain when we parent?

The discovery of the nature and extent of brain plasticity has led to a tremendous advance in our understanding of what happens to the brain during the learning process—and to an explosion of products claiming to trigger and enhance brain plasticity in developing children. Many products tout harnessing brain plasticity as a key benefit, and the notion that parents can build a designer brain smarter than anyone else’s by using these products is certainly appealing. But what is “plasticity” and what should parents actually do to harness this aspect of brain development in their children?

Plasticity is the brain’s lifelong capacity to form new synapses (connections between nerve cells) and even new neural pathways, making and strengthening connections so that learning accelerates and the ability to access and apply what has been learned becomes more and more efficient.

Developmental studies of plasticity trace how brain architecture and brain wiring are modified when the brain is exposed to novel situations. In this case, brain “wiring” means the axon connections among brain regions and the activities those brain regions carry out. Rather like an architect drawing a wiring diagram for your home, indicating where the wires will go for the stove, refrigerator, air-conditioning, and so on, researchers have been drawing a wiring diagram for the brain. In the process they have established that the cerebral cortex is not a fixed entity but is being continuously modified by learning. It turns out that the “wires” in the cortex are constantly reconnecting and will continue to do so based on input from the outside world.

Let’s take a look at what happens with brain plasticity when a child first learns to read. Initially, no part of the brain is wired specifically for reading. As the child becomes a more proficient reader, more and more brain cells and nerve circuits are recruited to the task. The brain uses plasticity when a child begins to recognize words and comprehend what he is reading. The spoken word “ball,” which the child already comprehends, now becomes associated with the letters B‑A‑L‑L. In this way, learning to read is a form of neural plasticity.

The discovery that a developing brain can be wired to recognize letters, along with other amazing discoveries about neural plasticity, is often translated into commercial products touting enhanced “brain fitness” as a benefit. But just because a scientific experiment shows that a particular activity triggers brain plasticity doesn’t mean that this particular activity (such as seeing letters on a computer screen) is required to achieve the effect, nor does it mean that the activity is the only means of generating plasticity. Computer-based letter recognition drills do indeed activate and train symbol recognition centers in the visual cortex using brain plasticity. So does sitting down and reading a book with your child, a parent-child interactive approach called “dialogic reading.” But computer screens and apps train the brain only to recognize the letters, not to understand the meaning of the words the letters represent. Dialogic reading, by contrast, is intuitive and interactive, and it naturally harnesses neural plasticity to make axon connections between the letter recognition centers and the language and thinking centers in the brain.

Professor Paul Yoder at Vanderbilt University and I described how children learn to understand spoken language in a paper published in the International Journal of Developmental Neuroscience. We showed that typically developing children learn to discriminate speech sounds quite efficiently with or without the benefit of exposure to special speech discrimination exercises or computer games. These speech discrimination games, developed by leading neuroscientists, are marketed as specifically engaging neural plasticity. In fact, children whose brains were never exposed to speech discrimination drills or computer games end up with a perfectly organized and facile auditory cortex arising from natural speech input from their parents and others. And these children learn to talk, to read, and to think very well indeed. We also argued that unnatural auditory input (in this case, isolated auditory signals) would not result in the proper wiring or integration of that input with other brain regions, such as the language centers, that are necessary to distinguish and use speech in the real world.

Never forget that the brain will learn what it is taught. Brain science over the past decade has yielded a profound insight into neural plasticity: A developing brain will learn what it is taught. If computer software trains the brain to discriminate between little bits of sound, exactly that skill—recognizing bits of sound—is what the brain will learn and become wired to do. But this training will not generalize to the brain’s ability to understand what someone else is saying—or to learn to read, because discriminating sounds is only a small fragment of what the brain has to do to comprehend spoken language—and to read. If you want the brain to become wired for spoken language and for reading, then the input has to be real, functional, spoken language and dialogic reading. Breaking the speech signal into its component parts and teaching these via computer simply will not do the trick. And if you want the spoken language to serve as a tool for social communication with other human beings, the input has to occur in the context of human social interaction, which involves even more areas of the brain than the area dedicated to speech discrimination.

When a child reaches for a hat lying on the floor, and her dad says “hat,” this parent-child interaction triggers neural plasticity in the baby’s brain. The child’s brain is not only processing the phonic components of the word “hat” (h-a-t); it is also processing the visual image of the hat, the social context (playing with her dad), and the actual meaning of the word: a real hat. When dad intuitively puts the hat first on his head and then on her head, the speech sounds in the word “hat” are then associated (“paired”) with the perceptual properties of the hat as well as its function (covering the head). Later on, the child and her dad may read a book together and see a photograph of a bat (in this case, the flying mammal). Now the child hears her dad say “bat,” which of course is phonetically different from “hat.” But she is perceiving not only a difference in the speech sounds “h” and “b” but also the perceptual features of a bat and a hat, the social context in which the objects are encountered, and the functions of the objects.

It is not surprising that simply teaching discrimination between “h” and “b” using flashcards or a computer program cannot possibly convey the information the child will need to comprehend the difference between “hat” and “bat” in the real world. Intuitive parenting automatically teaches all these elements simultaneously; it is automatically a multisensory approach. Touching, seeing, speaking, and listening all provide context in a safe, nurturing environment, and multiple brain areas are activated and integrated via neural plasticity.

Adapted from “The Intuitive Parent: Why the Best Thing for Your Child Is You” by Stephen Camarata, PhD with permission of Current, an imprint of Penguin Publishing Group, a division of Penguin Random House LLC. Copyright © Stephen Camarata, 2015.