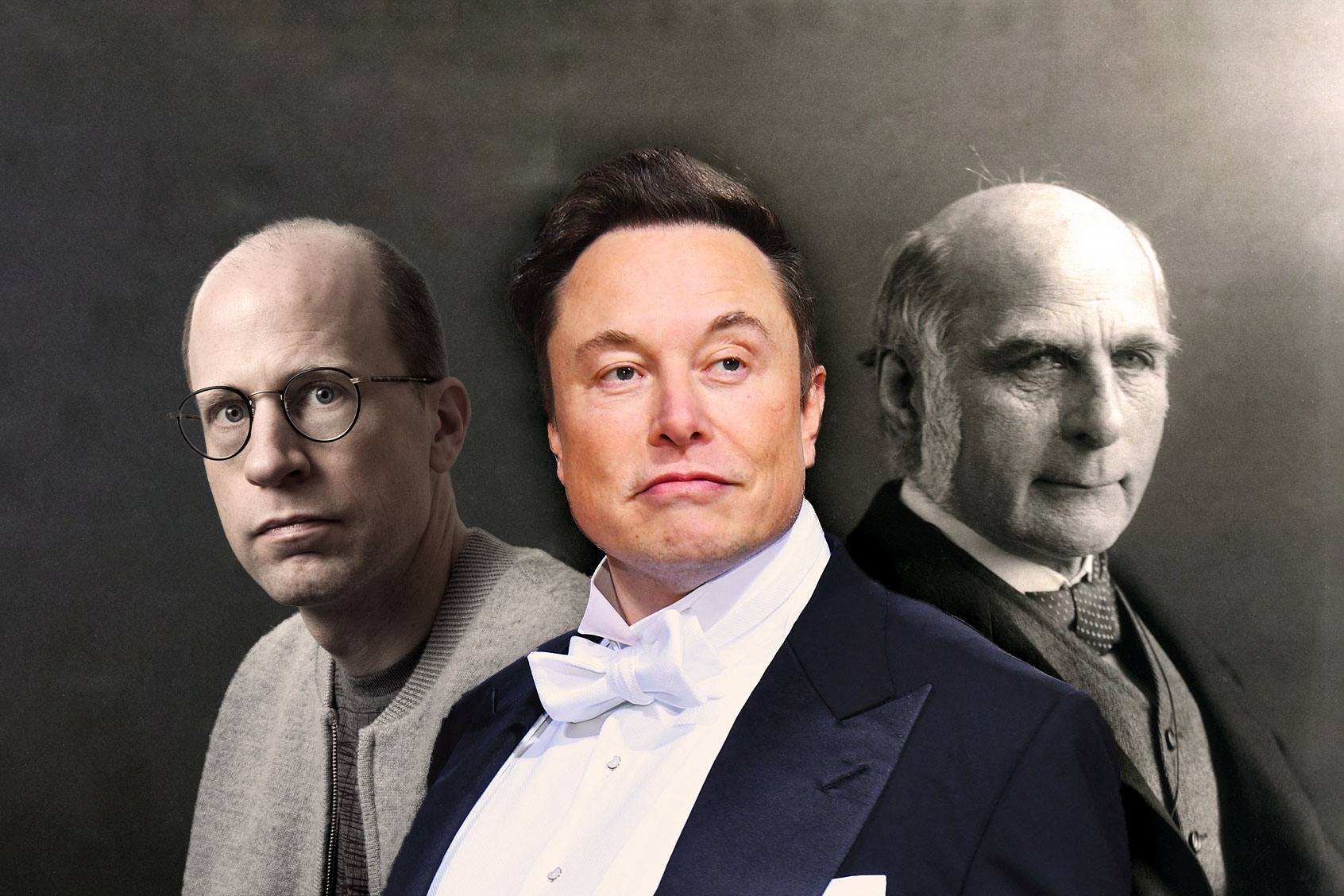

Perhaps you’ve seen the word “longtermism” in your social media feed. Or you’ve stumbled upon the New Yorker profile of William MacAskill, the public face of longtermism. Or read MacAskill’s recent opinion essay in the New York Times. Or seen the cover story in TIME magazine: “How to Do More Good.” Or noticed that Elon Musk retweeted a link to MacAskill’s new book, “What We Owe the Future,” with the comment, “Worth reading. This is a close match for my philosophy.”

As I have previously written, longtermism is arguably the most influential ideology that few members of the general public have ever heard about. Longtermists have directly influenced reports from the secretary-general of the United Nations; a longtermist is currently running the RAND Corporation; they have the ears of billionaires like Musk; and the so-called Effective Altruism community, which gave rise to the longtermist ideology, has a mind-boggling $46.1 billion in committed funding. Longtermism is everywhere behind the scenes — it has a huge following in the tech sector — and champions of this view are increasingly pulling the strings of both major world governments and the business elite.

But what is longtermism? I have tried to answer that in other articles, and will continue to do so in future ones. A brief description here will have to suffice: Longtermism is a quasi-religious worldview, influenced by transhumanism and utilitarian ethics, which asserts that there could be so many digital people living in vast computer simulations millions or billions of years in the future that one of our most important moral obligations today is to take actions that ensure as many of these digital people come into existence as possible.

In practical terms, that means we must do whatever it takes to survive long enough to colonize space, convert planets into giant computer simulations and create unfathomable numbers of simulated beings. How many simulated beings could there be? According to Nick Bostrom — the Father of longtermism and director of the Future of Humanity Institute — there could be at least 10⁵⁸ digital people in the future, or a 1 followed by 58 zeros. Others have put forward similar estimates, although as Bostrom wrote in 2003, “what matters … is not the exact numbers but the fact that they are huge.”

In this article, however, I don’t want to focus on how bizarre and dangerous this ideology is and could be. Instead, I think it would be useful to take a look at the community out of which longtermism emerged, focusing on the ideas of several individuals who helped shape the worldview that MacAskill and others are now vigorously promoting. The most obvious place to start is with Bostrom, whose publications in the early 2000s — such as his paper “Astronomical Waste,” which was recently retweeted by Musk — planted the seeds that have grown into the kudzu vine crawling over the tech sector, world governments and major media outlets like the New York Times and TIME.

Nick Bostrom is, first of all, one of the most prominent transhumanists of the 21st century so far. Transhumanism is an ideology that sees humanity as a work in progress, as something that we can and should actively reengineer, using advanced technologies like brain implants, which could connect our brains to the Internet, and genetic engineering, which could enable us to create super-smart designer babies. We might also gain immortality through life-extension technologies, and indeed many transhumanists have signed up with Alcor to have their bodies (or just their heads and necks, which is cheaper) frozen after they die so that they can be revived later on, in a hypothetical future where that’s possible. Bostrom himself wears a metal buckle around his ankle with instructions for Alcor to “take custody of his body and maintain it in a giant steel bottle flooded with liquid nitrogen” after he dies.

In a paper co-authored with his colleague at the Future of Humanity Institute, Carl Shulman, Bostrom explored the possibility of engineering human beings with super-high IQs by genetically screening embryos for “desirable” traits, destroying those that lack these traits, and then growing new embryos from stem cells, over and over again. They found that by selecting one embryo out of 10, creating 10 more out of the one selected, and repeating that process 10 times over, scientists could create a radically enhanced person with IQ gains of up to 130 points.

This engineered person might be so different from us — so much more intelligent — that we would classify them as a new, superior species: a posthuman. According to Bostrom’s 2020 “Letter From Utopia,” posthumanity could usher in a techno-utopian paradise marked by wonders and happiness beyond our wildest imaginations. Referring to the amount of pleasure that could exist in utopia, the fictional posthuman writing the letter declares: “We have immense silos of it here in Utopia. It pervades all we do, everything we experience. We sprinkle it in our tea.”

Central to the longtermist worldview is the idea of existential risk, introduced by Bostrom in 2002. He originally defined it as any event that would prevent us from creating a posthuman civilization, although a year later he implied that it also includes any event that would prevent us from colonizing space and simulating enormous numbers of people in giant computer simulations (this is the article that Musk retweeted).

More recently, Bostrom redefined the term as anything that would stop humanity from attaining what he calls “technological maturity,” or a condition in which we have fully subjugated the natural world and maximized economic productivity to the limit — the ultimate Baconian and capitalist fever-dreams.

For longtermists, there is nothing worse than succumbing to an existential risk: That would be the ultimate tragedy, since it would keep us from plundering our “cosmic endowment” — resources like stars, planets, asteroids and energy — which many longtermists see as integral to fulfilling our “longterm potential” in the universe.

What sorts of catastrophes would instantiate an existential risk? The obvious ones are nuclear war, global pandemics and runaway climate change. But Bostrom also takes seriously the idea that we already live in a giant computer simulation that could get shut down at any moment (yet another idea that Musk seems to have gotten from Bostrom). Bostrom further lists “dysgenic pressures” as an existential risk, whereby less “intellectually talented” people (those with “lower IQs”) outbreed people with superior intellects.

This is, of course, straight out of the handbook of eugenics, which should be unsurprising: the term “transhumanism” was popularized in the 20th century by Julian Huxley, who from 1959 to 1962 was the president of the British Eugenics Society. In other words, transhumanism is the child of eugenics, an updated version of the belief that we should use science and technology to improve the “human stock.”

It should be clear from this why the “Future of Humanity Institute” sends a shiver up my spine. This institute isn’t just focused on what the future might be like. It’s advocating for a very particular worldview — the longtermist worldview — that it hopes to actualize by influencing world governments and tech billionaires. And to this point, its efforts are paying off.

Robin Hanson is, alongside William MacAskill, a “research associate” at the Future of Humanity Institute. He is also a “men’s rights” advocate who has been involved in transhumanism since the 1990s. In his contribution to the 2008 book “Global Catastrophic Risks,” which was co-edited by Bostrom, he argued that in order to rebuild industrial civilization if it were to collapse, we might need to “retrace the growth path of our human ancestors,” passing from a hunter-gatherer to an agricultural phase, leading up to our current industrial state. How could we do this? One way, he suggested, would be to create refuges — e.g., underground bunkers — that are continually stocked with humans. But not just any humans will do: if we end up in a pre-industrial phase again,

> it might make sense to stock a refuge [or bunker] with real hunter-gatherers and subsistence farmers, together with the tools they find useful. Of course such people would need to be disciplined enough to wait peacefully in the refuge until the time to emerge was right. Perhaps such people could be rotated periodically from a well-protected region where they practiced simple lifestyles, so they could keep their skills fresh.

In other words, Hanson’s plan is to take some contemporary hunter-gatherers — whose populations have been decimated by industrial civilization — and stuff them into bunkers with instructions to rebuild industrial civilization in the event that ours collapses. This is, as Audra Mitchell and Aadita Chaudhury write, “a stunning display of white possessive logic.”

More recently, Hanson became embroiled in controversy after he seemed to advocate for “sex redistribution” along the lines of “income redistribution,” following a domestic terrorist attack carried out by a self-identified “incel.” This resulted in Slate wondering whether Hanson is the “creepiest economist in America.” Not to disappoint, Hanson doubled down, writing a response to Slate’s article titled “Why Economics Is, and Should Be, Creepy.” But this isn’t the most appalling thing Hanson has written or said. Consider another blog post published years earlier entitled “Gentle Silent Rape,” which is just as horrifying as it sounds. Or perhaps the award should go to his shocking assertion that

> “the main problem” with the Holocaust was that there weren’t enough Nazis! After all, if there had been six trillion Nazis willing to pay $1 each to make the Holocaust happen, and a mere six million Jews willing to pay $100,000 each to prevent it, the Holocaust would have generated $5.4 trillion worth of consumers surplus [quoted by Bryan Caplan in a debate with Hanson]

Nick Beckstead is, along with MacAskill and Hanson, another research associate at the Future of Humanity Institute. He’s also the CEO of the FTX Foundation, which is largely funded by the crypto-billionaire Sam Bankman-Fried. Previously, Beckstead was a program officer for Open Philanthropy, which in 2016 gave Hanson $290,345 “to analyze potential scenarios in the future development of artificial intelligence.”

Along with Bostrom, Beckstead is credited as one of the founders of longtermism because of his 2013 PhD dissertation titled “On the Overwhelming Importance of Shaping the Far Future,” which longtermist Toby Ord describes as “one of the best texts on existential risk.” Beckstead made the case therein that what matters more than anything else in the present is how our actions will influence the future in the coming “millions, billions, and trillions of years.” How do we manage this? One way is to make sure that no existential risks occur that could foreclose our “vast and glorious” future among the heavens, with trillions of simulated people living in virtual realities. Another is to figure out ways of altering the trajectory of civilization’s development: Even small changes could have ripple effects that, over millions, billions and trillions of years, add up to something significant.

One implication of Beckstead’s view is that, to quote him, since “saving lives in poor countries may have significantly smaller ripple effects than saving and improving lives in rich countries, … it now seems more plausible to me that saving a life in a rich country is substantially more important than saving a life in a poor country, other things being equal.”

Why would that be so, exactly? Because “richer countries have substantially more innovation, and their workers are much more economically productive.” This makes good sense within the longtermist worldview. As Hilary Greaves — another research associate next to Hanson and MacAskill — notes in an interview, we intuitively think that transferring wealth from the rich to the poor is the best way to improve the world, but “longtermist lines of thought suggest that something else might be better still,” namely, transferring wealth in the opposite direction.

William MacAskill initially made a name for himself by encouraging young people to work on Wall Street, or for petrochemical companies, so they can earn more money to give to charity. More recently, he’s become the poster boy for longtermism, thanks to his brand new book “What We Owe the Future,” which aims to be something like the Longtermist Bible, laying out the various commandments and creeds of the longtermist religion.

In 2021, MacAskill defended the view that caring about the long term should be the key factor in deciding how to act in the present. When judging the value of our actions, we should not consider their immediate effects, but rather their effects a hundred or even a thousand years from now. Should we help the poor today? Those suffering from the devastating effects of climate change, which disproportionately affects the Global South? No, we must not let our emotions get the best of us: we should instead follow the numbers, and the numbers clearly imply that ensuring the birth of 10⁴⁵ digital people — this is the number that MacAskill uses — must be our priority.

Although the suffering of 1.3 billion people is very bad, MacAskill would admit, the difference between 1.3 billion and 10⁴⁵ is so vast that if there’s even a tiny chance that one’s actions will help create these digital people, the expected value of that action could be far greater than the expected value of helping those living and suffering today. Morality, in this view, is all about crunching the numbers; as the longtermist Eliezer Yudkowsky once put it, “Just shut up and multiply.”
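
To see how lopsided this arithmetic is, consider a minimal Python sketch of the expected-value comparison. It is an illustration under stated assumptions, not MacAskill's own calculation: it counts each person as one unit of value, and the one-in-10^30 chance of success is a hypothetical figure chosen only for the example. The 1.3 billion and 10^45 figures are the ones cited above.

```python
from fractions import Fraction

# Figures taken from the article.
PRESENT_PEOPLE = 13 * 10**8   # 1.3 billion people suffering today
FUTURE_PEOPLE = 10**45        # digital people in the far future

# Hypothetical assumption for illustration only: the chance that a
# given action actually helps bring the future people into existence.
ASSUMED_CHANCE = Fraction(1, 10**30)


def expected_value(probability, people):
    """Expected number of people helped, counting each person as one unit."""
    return probability * people


def break_even_probability(certain_people, speculative_people):
    """Smallest chance at which the speculative action matches the certain one."""
    return Fraction(certain_people, speculative_people)


# Helping people today is treated as certain (probability 1).
certain = expected_value(Fraction(1), PRESENT_PEOPLE)
speculative = expected_value(ASSUMED_CHANCE, FUTURE_PEOPLE)
threshold = break_even_probability(PRESENT_PEOPLE, FUTURE_PEOPLE)

print("Certain action, expected people helped:    ", certain)
print("Speculative action, expected people helped:", speculative)
print("Break-even probability:                    ", threshold)
```

Under these assumptions the speculative action comes out ahead by a factor of several hundred thousand, and it keeps coming out ahead until its chance of success drops below 13 in 10^37. That is the sense in which the numbers, once multiplied, swamp any present-day concern.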

In his new book, MacAskill takes a slightly more moderate approach. Focusing on the far future, he now argues, is not the key priority of our time but a key priority. But this move, switching from the definite to the indefinite article, still yields some rather troubling conclusions. For example, MacAskill claims that from a longtermist perspective we should be much more worried about underpopulation than overpopulation, since the more people there are, the more technological “progress” there will be. Trends right now suggest that the global population may begin to decline, which would be a very bad thing, in MacAskill’s view.

MacAskill sees an out, however, arguing that we might not need to create more human beings to keep the engines of progress roaring. We could instead “develop artificial general intelligence (AGI) that could replace human workers — including researchers. This would allow us to increase the number of ‘people’ working on R & D as easily as we currently scale up production of the latest iPhone.” After all, these AGI worker-people could be easily duplicated — the same way you might duplicate a Word document — to yield more workers, each toiling away as happy as can be researching and developing new products. MacAskill continues:

> Advances in biotechnology could provide another pathway to rebooting growth. If scientists with Einstein-level research abilities were cloned and trained from an early age, or if human beings were genetically engineered to have greater research abilities, this could compensate for having fewer people overall and thereby sustain technological progress.

As Jeremy Flores writes on Twitter, “you can almost see the baby Einsteins in test tubes — complete with mustaches and unkempt gray hair”!

But perhaps MacAskill’s most stunning claim is that the reason we should stop polluting our beautiful planet by burning coal and oil is that we may need these fossil fuels to rebuild our industrial civilization should it collapse. I will let MacAskill explain the idea:

> Burning fossil fuels produces a warmer world, which may make civilisational recovery more difficult. But it also might make civilisational recovery more difficult simply by using up a nonrenewable resource that, historically, seemed to be a critical fuel for industrialisation. … Since, historically, the use of fossil fuels is almost an iron law of industrialisation, it is plausible that the depletion of fossil fuels could hobble our attempts to recover from collapse.

In other words, from the longtermist perspective, we shouldn’t burn up all the fossil fuels today because we may need some to burn up later on in order to rebuild, using leftover coal and oil to pass through another Industrial Revolution and eventually restore our current level of technological development. This is an argument MacAskill has made many times before.

Just reflect for a moment on the harm that industrialization has caused the planet. We are in the early stages of the sixth major mass extinction in life’s 3.8 billion-year history on Earth. The global population of wild vertebrates — mammals, fish, reptiles, birds, amphibians — declined by an inconceivable 60% between 1970 and 2014. There are huge “dead zones” in our oceans from pollution. Our planet’s climate forecast is marked by mega-droughts, massive wildfires, melting glaciers, sea-level rise, more species extinctions, the collapse of major ecosystems, mass migrations, unprecedented famines, heat waves above the 95-degree Fahrenheit (35-degree Celsius) wet-bulb threshold of survivability, political instability, social upheaval, economic disruptions, wars and terrorism, and so on. Our industrial civilization itself could collapse because of these environmental disasters. MacAskill argues that if the “Civilization Reset” button is pressed, we should do it all over again.

Why would he argue this? If you recall his earlier claims about 10⁴⁵ people in vast computer simulations spread throughout the Milky Way, then you’ve answered the question for yourself.

Sam Bankman-Fried is a multi-billionaire longtermist who founded FTX, a cryptocurrency exchange whose philanthropic arm, the FTX Foundation, is run by Nick Beckstead. Is cryptocurrency a Ponzi scheme? According to Bankman-Fried’s description of Decentralized Finance (DeFi), it sure sounds like it. He recently claimed that “by number of Ponzi schemes there are way more in crypto, kinda per capita, than in other places,” although he doesn’t see this as especially problematic because “it’s just like a ton of extremely small ones.” Does that make it better, though? As David Pearce, a former colleague of Bostrom, asked last year in a social media post that links to an article about Bankman-Fried’s dealings, “Should effective altruists participate in Ponzi schemes?”

Bankman-Fried has big plans to reshape American politics to fit the longtermist agenda. Earlier this year, he funded the congressional campaign of Carrick Flynn, a longtermist research affiliate at the Future of Humanity Institute whose campaign was managed by Avital Balwit, also at the Future of Humanity Institute. Flynn received “a record-setting $12 million” from Bankman-Fried, who says he might “spend $1 billion or more in the 2024 [presidential] election, which would easily make him the biggest-ever political donor in a single election.” (Flynn lost his campaign for the Democratic nomination in Oregon’s 6th district; that $12 million won him just over 11,000 votes.)

Given Bankman-Fried’s interest in politics, we should expect to see longtermism become increasingly visible in the coming years. Flynn’s campaign to bring longtermism to the U.S. Capitol, although it failed, was just the beginning. Imagine, for a moment, having a longtermist president. Or imagine longtermism becoming the driving ideology of a new political party that gains a majority in Congress and votes on policies aligned with Bostrom’s vision of digital people in simulations, or Hanson’s suggestion about underground bunkers populated with hunter-gatherers, or MacAskill’s view on climate change. In fact, as a recent UN Dispatch article notes, the United Nations itself is already becoming an arm of the longtermist community:

> The foreign policy community in general and the … United Nations in particular are beginning to embrace longtermism. Next year at the opening of the UN General Assembly in September 2023, the Secretary General is hosting what he is calling a Summit of the Future to bring these ideas to the center of debate at the United Nations.

This point was driven home in a podcast interview with MacAskill linked to the article. According to MacAskill, the upcoming summit could help “mainstream” the longtermist ideology, doing for it what the first “Earth Day” did for the environmental movement in 1970. Imagine, then, a world in which longtermism — the sorts of ideas discussed above — becomes as common and influential as environmentalism is today. Bankman-Fried and the others are hoping for exactly this outcome.

This is only a brief snapshot of the community from which longtermism has sprung, along with some of the central ideas embraced by those associated with it. Not only has the longtermist community been a welcoming home to people who have worried about “dysgenic pressures” being an existential risk, supported the “men’s rights” movement, generated fortunes off Ponzi schemes and made outrageous statements about underpopulation and climate change, but it seems to have made little effort to foster diversity or investigate alternative visions of the future that aren’t Baconian, pro-capitalist fever-dreams built on the privileged perspectives of white men in the Global North. Indeed, according to a 2020 survey of the Effective Altruism community, 76% of its members are white and 71% are male, a demographic profile that I suspect is unlikely to change in the future, even as longtermism becomes an increasingly powerful force in the global village.

By understanding the social milieu in which longtermism has developed over the past two decades, one can begin to see how longtermists have ended up with the bizarre, fanatical worldview they are now evangelizing to the world. One can begin to see why Elon Musk is a fan of longtermism, or why leading “new atheist” Sam Harris contributed an enthusiastic blurb for MacAskill’s book. As noted elsewhere, Harris is a staunch defender of “Western civilization,” believes that “We are at war with Islam,” has promoted the race science of Charles Murray — including the argument that Black people are less intelligent than white people because of genetic evolution — and has buddied up with far-right figures like Douglas Murray, whose books include “The Strange Death of Europe: Immigration, Identity, Islam.”

It makes sense that such individuals would buy into the quasi-religious worldview of longtermism, according to which the West is the pinnacle of human development, the only solution to our problems is more technology and morality is reduced to a computational exercise (“Shut up and multiply”!). One must wonder, when MacAskill implicitly asks “What do we owe the future?” whose future he’s talking about. The future of indigenous peoples? The future of the world’s nearly 2 billion Muslims? The future of the Global South? The future of the environment, ecosystems and our fellow living creatures here on Earth? I don’t think I need to answer those questions for you.

If the future that longtermists envision reflects the community this movement has cultivated over the past two decades, who would actually want to live in it?
