There are a lot of major problems today with tangible, real-world consequences. A short list might include terrorism, U.S.-Russian relations, climate change and biodiversity loss, income inequality, health care, childhood poverty, and the homegrown threat of authoritarian populism, most notably associated with the presumptive nominee for the Republican Party, Donald Trump.

Yet if you’ve been paying attention to the news for the past several years, you’ve almost certainly seen articles from a wide range of news outlets about the looming danger of artificial general intelligence, or “AGI.” For example, Stephen Hawking has repeatedly expressed that “the development of full artificial intelligence could spell the end of the human race,” and Elon Musk — of Tesla and SpaceX fame — has described the creation of superintelligence as “summoning the demon.” Furthermore, the Oxford philosopher and director of the Future of Humanity Institute, Nick Bostrom, published a New York Times best-selling book in 2014 called Superintelligence, in which he suggests that the “default outcome” of building a superintelligent machine will be “doom.”

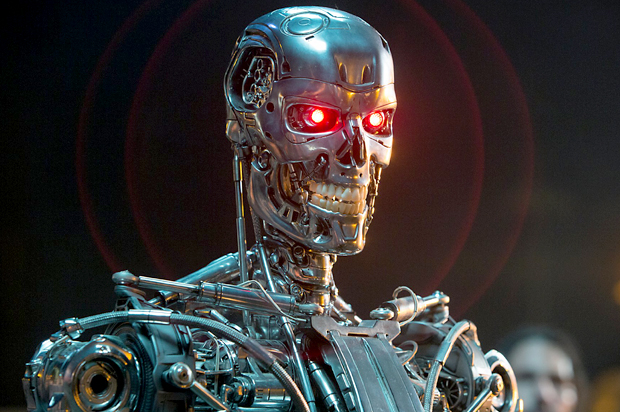

What’s with all this fear-mongering? Should we really be worried about a takeover by killer computers hell-bent on the total destruction of Homo sapiens? The first thing to recognize is that a Terminator-style war between humans and humanoid robots is not what the experts are anxious about. Rather, the scenarios that keep these individuals awake at night are far more catastrophic. This may be difficult to believe but, as I’ve written elsewhere, sometimes truth is stranger than science fiction. Indeed, given that the issue of AGI isn’t going anywhere anytime soon, it’s increasingly important for the public to understand exactly why the experts are nervous about superintelligent machines. As the Future of Life Institute recently pointed out, there’s a lot of bad journalism about AGI out there. This is a chance to correct the record.

Toward this goal, step one is to realize that your brain is an information-processing device. In fact, many philosophers talk about the brain as the hardware (or rather, the “wetware”) of the mind, and the mind as the software of the brain. Directly behind your eyes is a high-powered computer that weighs about three pounds and has roughly the same consistency as Jell-O. It’s also the most complex object in the known universe. Nonetheless, the rate at which it’s able to process information is much, much slower than the information-processing speed of an actual computer. The reason is that computers process information by propagating electrical signals, which move at close to the speed of light, whereas the fastest signals in your brain travel at around 100 meters per second. Fast, to be sure, but nowhere near as fast as light.

Consequently, an AGI could think about the world at speeds many orders of magnitude faster than our brains can. From the AGI’s point of view, the outside world — including people — would move so slowly that everything would appear almost frozen. As the theorist Eliezer Yudkowsky calculates, for a computer running a million times faster than our puny brains, “a subjective year of thinking would be accomplished for every 31 physical seconds in the outside world, and a millennium would fly by in eight-and-a-half hours.”
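The arithmetic behind that quote is easy to check. The short sketch below assumes a year of 365.25 days and takes the million-fold speedup from the quoted passage at face value; the function name is my own. It gives roughly 31.6 seconds per subjective year and a little under nine hours per subjective millennium, close to the quoted figures, which appear to be rounded.

```python
# Rough check of the speedup arithmetic, under stated assumptions.
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # assumed year length: 31,557,600 seconds
SPEEDUP = 1_000_000                       # the factor given in the quoted passage


def wall_clock_seconds(subjective_years, speedup=SPEEDUP):
    """Physical seconds that pass while the machine experiences
    the given number of subjective years."""
    return subjective_years * SECONDS_PER_YEAR / speedup


year_seconds = wall_clock_seconds(1)
millennium_hours = wall_clock_seconds(1000) / 3600

print(f"One subjective year:       {year_seconds:.1f} physical seconds")
print(f"One subjective millennium: {millennium_hours:.2f} physical hours")
```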

Already, then, an AGI would have a huge advantage. Imagine yourself in a competition against a machine that has a whole year to work through a cognitive puzzle for every 31 seconds that you spend trying to think up a solution. The mental advantage of the AGI would be truly profound. Even a large team of humans working together would be no match for a single AGI with so much time on its hands. Now imagine that we’re not in a puzzle-solving competition with an AGI but a life-and-death situation in which the AGI wants to destroy humanity. While we struggle to come up with strategies for keeping it contained, it would have ample time to devise a diabolical scheme to exploit any technology within electronic reach for the purpose of destroying humanity.

But a diabolical AGI isn’t — once again — what many experts are actually worried about. This is a crucial point that the Harvard psychologist Steven Pinker misses in a comment about AGI for the website Edge.org. To quote Pinker at length:

“The other problem with AGI dystopias is that they project a parochial alpha-male psychology onto the concept of intelligence. Even if we did have superhumanly intelligent robots, why would they want to depose their masters, massacre bystanders, or take over the world? Intelligence is the ability to deploy novel means to attain a goal, but the goals are extraneous to the intelligence itself: being smart is not the same as wanting something. History does turn up the occasional megalomaniacal despot or psychopathic serial killer, but these are products of a history of natural selection shaping testosterone-sensitive circuits in a certain species of primate, not an inevitable feature of intelligent systems.” Pinker then concludes with, “It’s telling that many of our techno-prophets can’t entertain the possibility that artificial intelligence will naturally develop along female lines: fully capable of solving problems, but with no burning desire to annihilate innocents or dominate the civilization.”

Unfortunately, such criticism misunderstands the danger. While it’s conceptually possible that an AGI really does have malevolent goals — for example, someone could intentionally design an AGI to be malicious — the more likely scenario is one in which the AGI kills us because doing so happens to be useful. By analogy, when a developer wants to build a house, does he or she consider the plants, insects, and other critters that happen to live on the plot of land? No. Their death is merely incidental to a goal that has nothing to do with them. Or consider the opening scenes of The Hitchhiker’s Guide to the Galaxy, in which “bureaucratic” aliens schedule Earth for demolition to make way for a “hyperspatial express route” — basically, a highway. In this case, the aliens aren’t compelled to destroy us out of hatred. We just happen to be in the way.

The point is that what most theorists are worried about is an AGI whose values — or final goals — don’t fully align with ours. This may not sound too bad, but a bit of reflection shows that if an AGI’s values fail to align with ours in even the slightest ways, the outcome could very well be, as Bostrom argues, doom. Consider the case of an AGI — thinking at the speed of light, let’s not forget — that is asked to use its superior intelligence for the purpose of making humanity happy. So what does it do? Well, it destroys humanity, because people can’t be sad if they don’t exist. Start over. You tell it to make humanity happy, but without killing us. So it notices that humans laugh when we’re happy, and hooks up a bunch of electrodes to our faces and diaphragm that make us involuntarily convulse as if we’re laughing. The result is a strange form of hell. Start over, again. You tell it to make us happy without killing us or forcing our muscles to contract. So it implants neural electrodes into the pleasure centers of everyone’s brains, resulting in a global population in such euphoric trances that people can no longer engage in the activities that give life meaning. Start over — once more. This process can go on for hours. At some point it becomes painfully obvious that getting an AGI’s goals to align with ours is going to be a very, very tricky task.
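The “start over” loop can be caricatured in a few lines of code. What follows is only a toy sketch: the list of plans, the sadness scores, and the flag names are all invented for illustration, and a real system would face an unbounded space of plans rather than four. The optimizer simply picks whichever permitted plan scores lowest on the proxy it was given, so each rule we add just moves it on to the next loophole.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    """A hypothetical plan; all values below are made up for illustration."""
    name: str
    sadness: int                  # the proxy the machine is told to minimize
    kills_humans: bool = False
    forces_muscles: bool = False
    wires_brains: bool = False


PLANS = [
    Plan("eliminate humanity", sadness=0, kills_humans=True),
    Plan("electrodes that force laughter", sadness=1, forces_muscles=True),
    Plan("electrodes in pleasure centers", sadness=2, wires_brains=True),
    Plan("slowly improve living conditions", sadness=30),
]


def best_plan(plans, forbidden):
    """Return the permitted plan with the lowest proxy score."""
    allowed = [p for p in plans
               if not any(getattr(p, flag) for flag in forbidden)]
    return min(allowed, key=lambda p: p.sadness)


# Each "start over" adds one more rule to the list of forbidden side effects.
forbidden = []
for new_rule in [None, "kills_humans", "forces_muscles", "wires_brains"]:
    if new_rule is not None:
        forbidden.append(new_rule)
    print(f"forbidden={forbidden} -> {best_plan(PLANS, forbidden).name}")
```

In this toy world three patches are enough, but only because the list of plans is finite and we wrote it ourselves. The worry in the text is that nobody can enumerate the loopholes in advance.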

Another famous example that captures this point involves a superintelligence whose sole mission is to manufacture paperclips. This sounds pretty benign, right? How could a “paperclip maximizer” pose an existential threat to humanity? Well, if the goal is to make as many paperclips as possible, then the AGI will need resources to do this. And what are paperclips composed of? Atoms — the very same physical stuff out of which your body is composed. Thus, for the AGI, humanity is nothing more than a vast reservoir of easily accessible atoms, atoms, atoms. As Yudkowsky eloquently puts it, “The [AGI] does not hate you, nor does it love you, but you are made out of atoms which it can use for something else.” And just like that, the flesh and bones of human beings are converted into bendable metal for holding short stacks of paper.

At this point, one might object: “Wait a second, we’re talking about superintelligence, right? How could a truly superintelligent machine be fixated on something so dumb as creating as many paperclips as possible?” Well, just look around at humanity. By every measure, we are by far the most intelligent creatures on our planetary spaceship. Yet our species is obsessed with goals and values that are, when one takes a step back and peers at the world with “new eyes,” incredibly idiotic, perplexing, harmful, foolish, self-destructive, other-destructive, and just plain weird.

For example, some people care so much about money that they’re willing to ruin friendships, destroy lives and even commit murder or start wars to acquire it. Others are so obsessed with obeying the commandments of ancient “holy texts” that they’re willing to blow themselves up in a market full of non-combatants. Or consider a less explicit goal: sex. Like all animals, humans have an impulse to copulate, and this impulse causes us to behave in certain ways — in some cases, to risk monetary losses and personal embarrassment. The appetite for sex is just there, pushing us toward certain behaviors, and there’s little we can do about the urge itself.

The point is that there’s no strong connection between how intelligent a being is and what its final goals are. As Pinker correctly notes above, intelligence is nothing more than a measure of one’s ability to achieve a particular aim, whatever it happens to be. It follows that any level of intelligence, including superintelligence, can be combined with just about any set of final goals, including goals that strike us as, well, stupid. A superintelligent machine could be no less infatuated with obeying Allah’s divine will or conquering countries for oil than some humans are.

So far, we’ve discussed the thought-speed of machines, the importance of making sure their values align with ours, and the weak connection between intelligence and goals. These considerations alone warrant genuine concern about AGI. But we haven’t yet mentioned the clincher that makes AGI an utterly unique problem unlike anything humanity has ever encountered. To understand this crucial point, consider how the airplane was invented. The first people to keep a powered aircraft airborne were the Wright brothers. On the windy beaches of North Carolina, they managed to stay off the ground for a total of 12 seconds. This was a marvelous achievement, but the aircraft was hardly adequate for transporting goods or people from one location to another. So, they improved its design, as did a long lineage of subsequent inventors. Airplanes were built with one, two, or three wings, composed of different materials, and eventually the propeller was replaced by the jet engine. One particular design — the Concorde — could even fly faster than the speed of sound, traversing the Atlantic from New York to London in less than 3.5 hours.

The crucial idea here is that the airplane underwent many iterations of innovation. Problems that arose in earlier designs were corrected in later ones, leading to increasingly safe and reliable aircraft. But this is not the situation we’re likely to be in with AGI. Rather, we’re likely to have one, and only one, chance to get all the problems mentioned above exactly right. Why? Because intelligence is power. For example, we humans are the dominant species on the planet not because of our long claws, sharp teeth and bulky musculatures. The key difference between Homo sapiens and the rest of the Animal Kingdom concerns our oversized brains, which enable us to manipulate and rearrange the world in incredible ways. It follows that if an AGI were to exceed our level of intelligence, it could potentially dominate not only the biosphere, but humanity as well.

What’s more, since creating intelligent machines is itself an intellectual task, an AGI could attempt to modify its own code, a possibility known as “recursive self-improvement.” The result could be an exponential intelligence explosion that, before one has a chance to say “What the hell is happening?”, yields a super-super-superintelligent AGI, or a being that towers over us to the extent that we tower over the lowly cockroach. Whoever creates the first superintelligent computer, whether it’s Google, the U.S. government, the Chinese government, the North Korean government, or a lone hacker in a garage, will have to get everything just right the first time. There probably won’t be opportunities for later iterations of innovation to fix flaws in the original design, if there are any. When it comes to AGI, the stakes are high.
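To get a feel for why compounding self-improvement could leave so little time to react, consider a deliberately crude model. It assumes, purely for illustration, that each round of self-modification multiplies the machine’s capability by a fixed factor. Neither the factor nor the idea of a single “capability” number comes from the AGI literature discussed here; both are simplifications of mine.

```python
def cycles_to_exceed(target_ratio, gain_per_cycle):
    """Count self-improvement cycles until capability reaches
    target_ratio times its starting level (toy model only)."""
    if gain_per_cycle <= 1:
        raise ValueError("gain_per_cycle must be greater than 1")
    capability = 1.0
    cycles = 0
    while capability < target_ratio:
        capability *= gain_per_cycle  # assumed fixed multiplicative gain
        cycles += 1
    return cycles


# If every cycle doubles capability, a thousandfold lead takes ten cycles.
print(cycles_to_exceed(1000, 2.0))
# Even a modest 10 percent gain per cycle gets there in a few dozen.
print(cycles_to_exceed(1000, 1.1))
```

How long a single cycle would take is anyone’s guess, but for a machine that thinks as fast as the one described earlier, the cycles themselves might not take long.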

It’s increasingly important for the public to understand the nature of thinking machines and why some experts are so worried about them. Without a grasp of these issues, claims like “A paperclip maximizer could destroy humanity!” will sound as apocalyptically absurd as “The Rapture is near! Save your soul while you still can!” Consequently, organizations dedicated to studying AGI safety could get defunded or shut down, and the topic of AGI could become the target of misguided mockery. The fact is that if we manage to create a “friendly” AGI, the benefits to humanity could be vast. But if we fail to get things right on the first go-around, the naked ape could very well end up as a huge pile of paperclips.