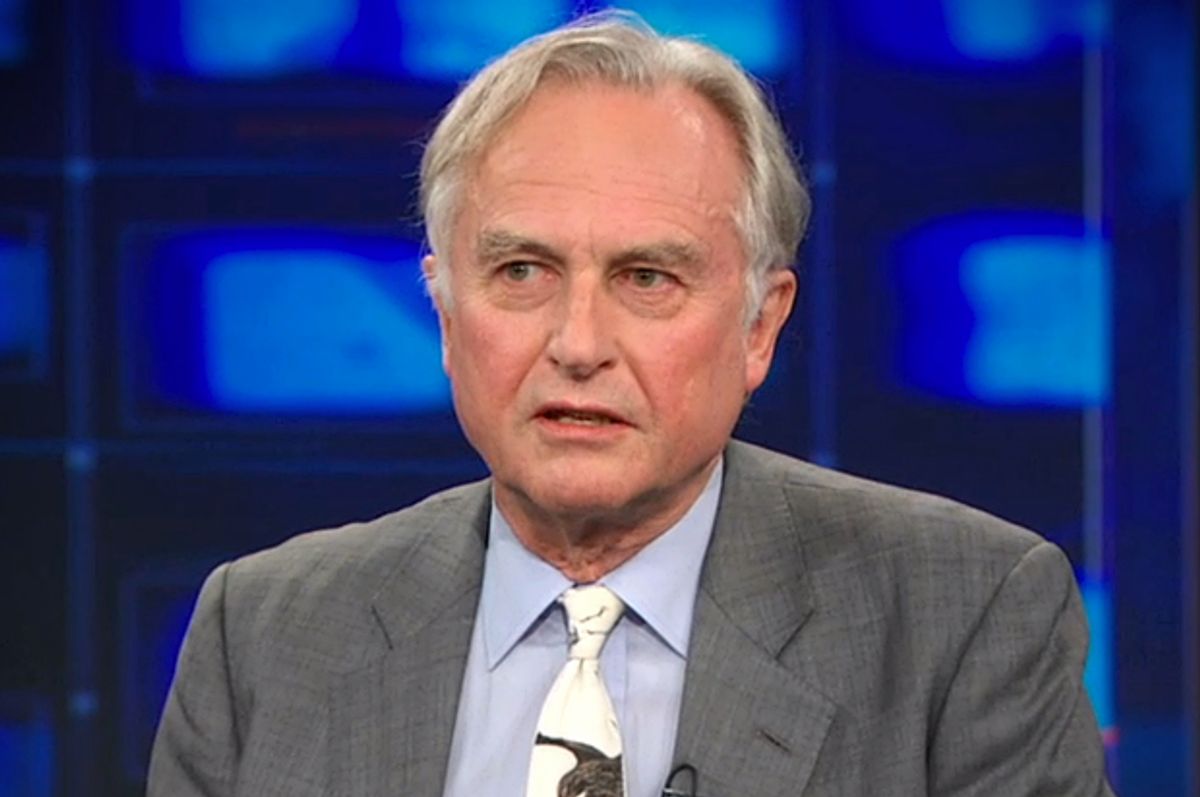

In history books, Oxford zoologist Richard Dawkins will be known for his contributions to science. But you’d never know that based on the last two years of his media coverage, which have centered on a series of controversial tweets, mostly about women and Islam. The tweets, and Dawkins’ attempts to defend them, have provoked fierce debate among atheists about whether his visibility as an advocate for secularism has become a liability to the cause. The story raises challenging questions about aging public figures in an era of instantaneous communication.

What’s at Stake

Richard Dawkins received an endowed chair at Oxford and honorary doctorates from 12 other universities for good reason. His research and writing about biological and cultural evolution radically changed the thinking of his generation. Two game-changing ideas he advanced were the notion that evolution operates at the level of genes rather than individuals, and the notion that natural selection operates outside of biology, determining, for example, which bits of culture spread or get handed down to future generations and which don’t. Dawkins popularized these ideas in his book "The Selfish Gene," in which he coined the term meme, defined as a unit of cultural information that replicates, like a melody, fad, joke, or religious belief. The term itself replicated widely along with the concept it represents, spawning a new field of study.

Today concepts like viral marketing are commonplace, and engineers write computer code that uses natural selection to generate novel solutions to complex problems. But at the time Dawkins first spelled them out, his ideas were radical. They built on the work of scientific greats including Charles Darwin, Ronald Fisher, George C. Williams, Bertrand Russell and Nikolaas Tinbergen, but Dawkins combined their findings to advance novel lines of thinking. If citation counts are any indicator, his scholarship laid the foundation for a generation of scientists who have gone on to test or critique his ideas, replacing some and refining others—which is exactly how scientific inquiry functions at its best.

After the September 11, 2001, attacks by Islamic terrorists who used airliners full of people and jet fuel as bombs, killing nearly 3,000 people, Dawkins shifted focus and took up trailblazing of another sort. He used (and to some extent spent) his standing as an academic scientist to mount a vigorous public critique of religion as a source of falsehood and harm. Together with journalist Christopher Hitchens, philosopher Daniel Dennett and neuroscientist Sam Harris, he became known as one of the “four horsemen” of the New Atheist movement. As in the sciences, Dawkins and his colleagues set in motion waves of change, and as in science their work has provoked fierce critique and debate among other intellectuals, many of whom agree in essence with their thinking but find parts oversimplified, overgeneralized or incomplete.

A View from Gen Y

As someone who believes that we each get one precious life, someone fascinated with understanding the intricacies of the natural world and the human mind, I owe Dawkins a debt of gratitude. And when I once found myself passing time with him before a speaking event, his demeanor as a seemingly genteel but awkward introvert made me even more grateful that he has been willing to tolerate such a high level of conflict and publicity for so long in the service of change.

But when I said as much to my college-age daughter, Brynn, she wrinkled her nose. “I think he’s a bit of an ass,” she shot back. I winced, but I knew what she was talking about. Brynn’s primary impression of Dawkins comes from those awkward comments, often about women, that make their way from Twitter to Facebook to news and opinion sites where indignant commentators call him a bigot or sexist and suggest that he should step down.

As an introvert and nerd-girl, Brynn calls things as she sees them. If our strengths and weaknesses are two sides of the same coin, one strength of introverts is that they have less need for social approval than extroverts and so speak uncomfortable truths when others won’t. (A corresponding downside is that they often exhibit less social finesse and sensitivity in how and what they say.) One of the strengths of nerds, including scientists, is that their obsession with figuring out what’s real makes them less prone to self-delusion than many of us. (A corresponding downside is that they often don’t consider the potential harms of their hypotheses and discoveries.)

Brynn is just a college student, possibly an aspiring scientist, but her blunt assessment of Dawkins mirrored the very qualities and behaviors that prompted it. Part of the reason Dawkins has been so influential in positive ways is that he has a compelling drive to say what he thinks, whether his opinion is popular or not. But coupled with Twitter, this quality has created a public relations storm that threatens to undermine his legacy as a pioneer and the ability of his foundation to advance secular values, including the study of religion as a natural phenomenon.

The Perils of Social Media

All of us have thoughts that are insensitive or reactive and driven by emotion, or simply not well thought out, or a product of our blind spots. Twitter is the ultimate means of blurting those thoughts to the world, and once they are out, you can’t take them back. Kids who come of age with social media learn this young, often by trial and error. Childhood and adolescence can be thought of as laboratories for trying on social behaviors and figuring out what works (and what doesn’t), with the goal of getting through many of the errors before they really count. Mercifully, at age 12 or 15, the consequences of blurting 140 characters to the world are usually minor.

But consider those of us who grew up with party-line phones and manual typewriters. For us, the trial-and-error starts at a time when our utterances have far heftier consequences, especially if we have been successful enough in some arena to attract a following. If we care about reaching an audience, we probably have some hazy notion that social media are where it’s at, that we should be tweeting and posting to Facebook and Instagram (but who knows what and when).

As old dogs, we find it tougher to learn new tricks. Add to this the fact that the brain’s executive function, which was immature but growing during those adolescent years (one reason we forgive teen peccadillos), begins to decline as middle age winds down. It gets harder to pay attention, and we become less able to edit our impulses.

Social media have inherent social risks, regardless of age, but the intersection between aging and social media is particularly perilous, and the more stature and visibility a person has attained, the more he or she has to lose. Many public figures, like politicians and corporate executives, have staff to vet their communications and institutional rules intended to prevent the kind of reputational damage that comes from broadcasting ideas that are unseemly, not thought out, or badly expressed. The rest of us (freelance writers like me, documentary filmmakers, actors, and emeritus Oxford professors) are largely on our own.

Realities We’d Like to Ignore

Sometime between middle age and the end of life, as bone and muscle composition change, most people make self-protective decisions to scale back physical activities that feel risky: skiing, surfing, lifting big rocks, climbing ladders. At some point most people stop driving as reaction time, focus, and visual acuity decline, although the age at which the risks exceed the benefits varies widely.

Cognitive changes related to aging are less obvious and less well understood than physical changes, but no less significant. Some aspects of information processing, such as processing speed, begin to decline in our 30s. Verbal ability remains stable the longest, which can mask changes in other kinds of processing (and allow politicians to remain in office indefinitely). In one small pilot study, 90 percent of adults over 70 showed some measurable evidence of cognitive decline; more conservative and complex measures suggest that 22 to 50 percent experience a condition that researchers term Mild Cognitive Impairment. Almost a third of us will eventually die with dementia, and given the indicators I assume that includes me.

Staying In for the Long Haul

Prudence dictates that at some point each of us with an audience should step out of social media and eventually the public eye. But between cognitive peak in middle age and the point at which even the most innovative thinker or eloquent orator should focus on long walks and loved ones lies a stretch as long as 20 to 40 years.

Developmental theorist Erik Erikson described middle adulthood, meaning the years from roughly age 35 to 60, as the time of “generativity,” the stage at which we are most creative and productive and make our mark. He believed that the primary goal of the later years was “integrity,” an inward process of synthesizing values and experiences and preparing for mortality.

But defining clean stages oversimplifies the matter. During the integrity years, those of us who care about discovery and society can contribute in powerful, important ways by staying engaged, and for some of us that will in fact be our most generative time. However, this means actively managing and minimizing the risks associated with the biology of aging. It can be done, with a little help from our friends (and, perhaps, editorial staff).

Who’s Got Your Back?

Managing aging wisely and well may have some parallels with managing drinking. Back in my youth, people drove themselves home after parties and sometimes paid the price. My daughter’s peers have a totally different ethic. They agree in advance who is taking care of whom; they trade off; and they step it up when one of their circle is going through a rough patch. When you are a college student at a party, it really, really helps to have friends watching out for you: friends who know you well enough to recognize when you’re not at your best, who trust you enough to tell you so, who are smart and strong enough to take your keys if need be, and who love you enough to see that you get home safe.

The value of this kind of support cannot be overstated, even at the very end of life (or coherence). Consider, at one extreme, Ronald Reagan, whose team hid his dementia from all but the most discerning observers while he finished out his presidency. Or consider, at the other extreme, philosopher Antony Flew. In the absence of someone to confront his dementia and protect his legacy, Flew had his confusion exploited by evangelists who leveraged his stature to elevate their own, and the final book bearing his name contained ideas that would have shamed him in his prime.

The presence of an honest, protective, confrontive community of love and support makes a world of difference during college and at the end of life, but also at every point in between. As poet Gwendolyn Brooks put it, “We are each other’s harvest: we are each other’s business: we are each other’s magnitude and bond.”

When I mentioned to my husband (and chief supporter and critic) that I was wrestling with this article, he asked, “Are you now adding a theme of aging and cognition to your writing topics?”

“It seems so,” I responded. “This is the fourth time it has come up.”

“That’s good,” he said. “You’ve been paying a lot of attention to this issue lately.” He’s right. With our daughters off to college, I find myself keenly aware of the next phase, and how quickly it will be over. “Besides,” he added, “if you keep writing about it, as you start declining your readers will recognize what’s happening.” We both laughed. For some reason, it was a comforting thought.

Maybe I look forward to having more of an excuse for my inevitable failures of logic or compassion or fact-checking. As an independent writer, I find myself cringing regularly about something I’ve said in print, something that I wish I could clarify or nuance or even retract, but can’t because my words have replicated across the internet. Occasionally I have to go from site to site, confessing in a series of humiliating emails that something I wrote is factually inaccurate or patently unfair, and requesting corrections. I keep reminding myself that it would feel even worse to let the errors stand, that the power of the web to replicate ideas is a good thing, and that I am more than my lapses.

The Lucky Ones

Recently I sent Brynn a video based on an excerpt from Richard Dawkins’ book "Unweaving the Rainbow." I wanted her to know that Dawkins is more than his worst moments and to remind her, by analogy, that she is more than hers, as I am more than mine.

The video opens with a small brown bird falling from a nest and floundering. “We are going to die,” Dawkins begins, “and that makes us the lucky ones.”

"Most people are never going to die because they are never going to be born. The potential people who could have been here in my place but who will in fact never see the light of day outnumber the sand grains of Sahara. Certainly those unborn ghosts include greater poets than Keats, scientists greater than Newton. We know this because the set of possible people allowed by our DNA so massively exceeds the set of actual people. In the teeth of these stupefying odds it is you and I, in our ordinariness, that are here."

Brynn messaged me back a couple of days later: “The Dawkins video was pretty beautiful.” I knew she would get it. Even in our ordinariness, amid our faults and foibles and glitches and blurts, we create beauty and knowledge and love, because that is what it means to be alive. And as Richard Dawkins said, this complicated and awkward but ordinary mix makes us the lucky ones.
