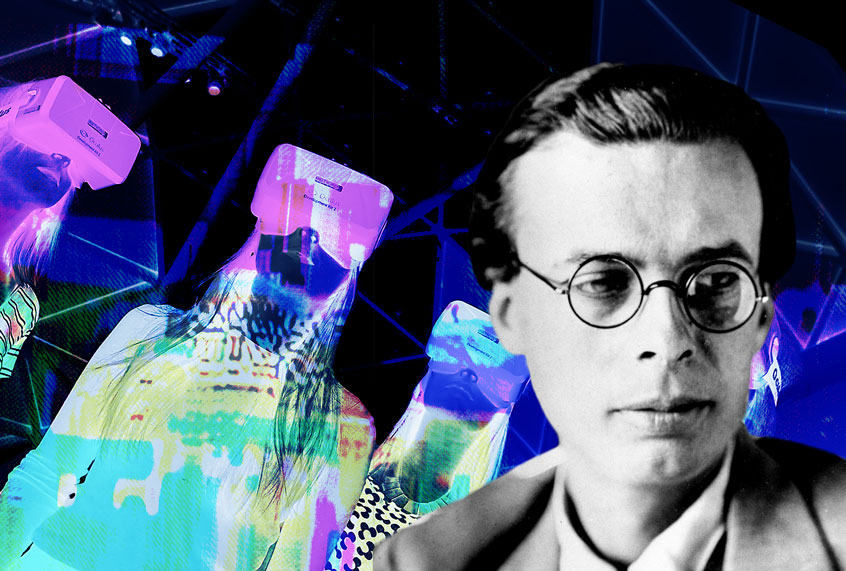

In 1958 the journalist Mike Wallace interviewed Aldous Huxley, the British author best known for writing “Brave New World.” This dystopian sci-fi novel, published in 1932, takes place in the fictional and future World State society, where human beings are produced in laboratories and assigned to different classes based on their intelligence and physical gifts.

In the Wallace interview Huxley spoke of threats to democracy, with an eye on the present and the future. Rather than focus on political and social inequalities, he pointed the finger at technologies we use willingly every day—especially those that have the potential to distract us, like television. “We mustn’t be caught by surprise by our own advancing technology,” he said.

Huxley emphasized that to remain in power one must obtain the “consent of the ruled.” And to get such consent, he said, requires “new techniques of propaganda” that have the ability to bypass the “rational side of man and appeal to his subconscious and his deeper emotions, and his physiology even, … making him actually love his slavery.” Today our ability to make rational choices, including political ones, is more compromised than ever. Most of us believe that tech companies aggregating hundreds of data points about us every day do it to provide us with traditional, “personalized” advertising. Many of us are okay with that, and don’t mind our data being collected to sell us products like soap or cars, or to recommend new music, movies, and TV shows. Fewer of us are aware that companies use our data to sell us political agendas and politicians, often by spreading propaganda and disinformation, intentionally misleading material tailored to exploit our vulnerabilities and foibles. We never consented to having our democracies taken over by the same process that entices us to purchase one brand (Starbucks, let’s say) over another (Dunkin’ Donuts).

In this way we’ve become polarized by digital technologies that run our personal data through opaque algorithms, making billions in advertising revenue for the companies that produce them, while working against the basic values of people-centered technology. Polarization especially undermines our control of democratic functions. People, not machines or corporations, should have power over their own political lives.

If we can no longer trust what we see—if in fact we are even manipulated by what we see—we must learn to understand, confront, and overcome the forces that threaten our individual minds and hopes for democracy. Indeed, human beings, bots, and microtargeting algorithms all play a role today in closing us off from facts, multiple points of view, and the contexts behind the information we see. As Huxley said in 1958: “The whole democratic procedure … is based on conscious choice [made] on rational ground.”

Democracy also depends on public debate. It assumes that the playing field for such debate is level: we may have socioeconomic differences, but each of us should have equal opportunity to vote according to our experience, our values, and our best judgment. As citizens we may have different political views, but the events that inform our perspectives should be accessible and commonly understood.

Transparency, central to any democratic system, depends on having equal information—not information “suggested” for us based on surveillance and data tracking or gathering. But tech giants carry out these pervasive practices in hundreds of hidden ways. The usual suspects, Facebook and Google, are not the only guilty ones. Even online newspapers we rely on for unbiased analysis and reporting, including those like the Guardian that discuss problems with surveillance, host tracking software similar to what these very companies use.

Whatever passes for transparency today seems one-directional: Tech companies know everything about us, but we know almost nothing about them. So how can we be sure that the information they feed us hasn’t been skewed and framed based on funding models and private news industry politics? Data breaches may happen every day, but how often do they involve citizens learning about government spying? Think of how rarely we learn of revelations like the ones the whistleblower Edward Snowden made in 2013.

Big technology companies, particularly Facebook and Google, have a huge role to play in the political lives of people across the world. Mark Zuckerberg could privately press a button to tell Donald Trump or Hillary Clinton supporters how to vote and, without anyone knowing, sway well over 300,000 votes—enough to tilt the balance in a divided election.

Google’s impact may be even greater. Although these companies profit from the public’s use of their platforms, it remains unclear whether they acknowledge the public responsibility to be forthcoming about their political impact. Facebook, with over 2 billion users across the world, has but two dozen employees working for its global governance team (according to several former employees I spoke with off the record). It’s one thing to profit from hosting a place where we do public things like socialize or read the news, but it’s quite another to be accountable to the public. Whether Facebook is accountable to its users and the principles of democracy seems questionable at best.

Politics and Media: A Virtual Reality?

At the time of his TV appearance in 1958, Huxley had just finished writing a series of essays titled “Enemies of Freedom.” At the start of the interview Wallace asked him to clarify what or whom he referred to as an enemy. Huxley did not name any lone sinister individual but instead talked about “impersonal forces … pushing in the direction of less and less freedom.” He suggested that “technological devices” could be used to accelerate the process of “imposing control.” Today we often refer to these impersonal forces as “bad actors”: the now-defunct Cambridge Analytica, for instance, and the Russian government, which has been accused of breaking legal and ethical boundaries to interfere in the 2016 US presidential election.

How have shifts in the production and use of media and technology come to impose control and thus threaten democracies? To get some answers, I interviewed David Axelrod, the chief strategist behind Barack Obama’s successful outsider campaign for the US presidency in 2008. Axelrod is now a political analyst and host on CNN. He bluntly points out that politics in the United States and much of the world has turned into a virtual reality—that the internet and television, which have converged into one, now feed the viewer with perspectives and information that “affirm rather than inform.”

Just a few decades ago, and not only in the United States, Axelrod says, the few news networks that existed tended to focus on the middle ground, covering the news in ways that were far more similar than different to one another. This tendency in the United States carried over from the controversial and later abandoned Federal Communications Commission (FCC) fairness doctrine that originated in the late 1940s, when the mainstream TV networks were ABC, CBS, and NBC, as they remain today. The fairness doctrine required broadcast licensees to present controversial topics in ways that the FCC deemed honest, balanced, and objective.

Today, however, news sources are brands. In the United States, Fox News speaks overwhelmingly to conservatives, including the current presidential administration, in an American version of what Axelrod describes as “state television.” MSNBC positions itself as the resistance. Across the world, we see similar examples. Increasingly, viewers know what perspective they will receive without even listening to a single word uttered on any program. And with the internet, the situation is no different. We watch and view content that reinforces our positions, all aided by algorithms that recommend and personalize content.

How interesting, I thought, that Axelrod characterized CNN as a middle-of-the-road network when its reporting tends to lean toward a critical view of President Trump’s transgressions. CNN, however, often excludes political positions that are significantly right or left of center. Does neutrality mean the absence of these voices, and is that erasure healthy for a democracy? Is the exclusion of these perspectives evidence that CNN is out of touch, given that the populist and supposed “anti-establishment” positions of politicians, such as Trump or Bernie Sanders, remain widely supported?

These questions bring other troubling ones to mind: Are we, as citizens, viewers, and users, really interested in unbiased, even objective, content? Is there even such a thing as impartiality? When Axelrod and I talked at some length about CNN’s political analyst Chris Cuomo, he described Cuomo as a journalist who does not take sides. Then he questioned whether there is “a market for a robust middle ground, one that challenges political positions taken by conservatives and liberals alike.” Cuomo’s Prime Time television show debuted in June 2018, airing at the same time as popular partisan shows hosted by MSNBC’s Rachel Maddow and Fox News’s Sean Hannity. Some viewed CNN’s decision to put Cuomo in that slot as a “suicide mission.” Nielsen ratings for January 2019 published in the Washington Times showed Cuomo, in his best month to date, still trailing with an average of 1.64 million nightly viewers to 3.25 million and 3.04 million (respectively) for Maddow and Hannity.

Axelrod and I next looked at the controversy surrounding Donald Trump and the media. His 2016 election was rife with questions and concerns regarding truth, information, and misinformation, which have continued to plague his early years in office. And yet Trump has continued to challenge and deride the mainstream media’s “fake news” coverage of his administration—and both the left and right wings of the political spectrum continue to critique mainstream media. The president has recognized, according to Axelrod, that “if you are willing to light yourself on fire, or light someone else on fire, you can dominate any news cycle. People check the president’s Twitter feed regularly for a fix, and I’m not just talking about people who are supporters.”

Whether we speak of reporting on partisan television, online platforms, or even Axelrod’s CNN, Trump is at the center of it. He continues to receive far more coverage on television and via social media platforms like Twitter than any other US politician. This has worked perfectly so far to solidify a political base, capture and maintain the attention of every voter, and disorient the opposition.

How can such concentrated power emerge in a media and technology environment where so much information is available? Discussing Trump with me, Axelrod paraphrased the perspective of the late US senator Daniel Patrick Moynihan: “You’re entitled to your own opinion, but you’re not entitled to your own facts.” Describing today’s political experience of media and technology as an “endless orgy of chocolate cake,” Axelrod expressed concern about how the internet has sowed political divisions within and across societies.

Democracies operate slowly, and our societies and leaders need to make sense of, rather than simply exploit, the disorientation that rapidly shifting technologies bring about. These technologies, according to Axelrod, “are coming faster and faster, creating a good deal of anxiety and a great deal of hyperactivity.” It is up to us to intervene, to ensure that the systems we have embraced serve us all.

Part of the challenge will be to look at what’s behind the curtain, the market logic that drives our media and technology. Whether we speak of cable television or the internet, most news comes to us from private, for-profit corporations. These channels are created to make money. Their profits are transacted through our addiction and attention to what we view or click on. And they depend on our continued engagement with it. For example, CNN’s constant framing of its programming as “breaking news” may mean that even if it is politically centrist, it leverages the frenzied sensationalism of a 24/7 news cycle. Axelrod acknowledged this point, agreeing that at the end of the day, if inscrutable private interests take our political systems hostage, then we must find an alternative. There is a role for profit-driven companies in our society, of course, but that role shouldn’t endanger the values and interests of the 99 percent.

Do More Data and More Channels Mean More Democracy?

As my conversation with the man behind Obama illustrates, media environments across the world have changed as more channels, web pages, and apps compete for the attention of citizens. Daniel Kreiss, a scholar of politics and technology at the University of North Carolina, points out to me that the world looks completely different today, even in much of the global South, than it did in the 1960s. In the United States, a few decades ago, one could run a political advertisement on television and reach over 90 percent of the electorate. In Kreiss’s recent book, Prototype Politics, however, he points out that we have witnessed a sea change: “Data has become more important because it helps candidates find the electorate, where they’re paying attention, and get a message out in front of that.”

Most citizens across the world seem to accept personalization, even microtargeting, in their economic lives. Marketing analytics, such as techniques that partition messaging and advertising to reach specific consumers (or demographics), have long existed.

What then has changed? First, we’ve experienced the exponential growth of data used not only to target us but also to organize the information we see. Combined with exponentially faster processing and cheaper storage, this rapid growth has made possible a type of fluid, even invisible, ordering of the world. Second, we’ve watched the use of analytics and personalization shift out of the commercial and economic spheres and into our political lives. That shift may compromise a major foundation of democracy, as the ability of the individual to participate politically without coercive manipulation is a central facet of democratic choice.

In the United States and a number of countries across the world, we see a weakening of traditional political parties and the rise of populism. Politicians such as Donald Trump, Rodrigo Duterte in the Philippines, and Recep Tayyip Erdoğan in Turkey express sympathy with aspects of authoritarianism (if not outright adoption of it). They have taken power especially because many voters experience a sense of betrayal by establishment institutions and politicians. Such figures can also rise to power through their ability to succeed within a technology and media climate where attention-grabbing content, turned into a spectacle on our Twitter or Facebook feeds, can be exploited for political gain.

Even in the pre-internet era, when television and other media networks were deregulated, we arrived at a satellite-television world where hundreds of channels replaced a few. Citizens today receive news from channels, networks, webpages, and applications whose numbers have expanded beyond imagination: we all experience the external political world through different “pipes of information.” And yet, despite such a range of choices, different channels are often owned by the same holding company. Massive media corporations such as Disney and Time Warner control the television market, and engage even more viewers, offering more and different options and programs. But, as the media scholar Robert McChesney cautioned in 1998: “The wealthier and more powerful media giants become, the poorer the prospects for participatory democracy.”

Aldous Huxley cautioned us in 1958 not to be surprised by our own advancing technology, its means of fueling propaganda, and its threat to democracy. In 2015, McChesney expressed cautious optimism about how technologies might support democracy: “We are in a position, in some respects for the first time, to make sense of the Internet experience and highlight the cutting-edge issues it poses for society, … to better understand the decisions that society can make about that type of Internet we will have and, accordingly, what type of humans we will be and not be in the future.”

# # #

Excerpted from “Beyond the Valley: How Innovators around the World are Overcoming Inequality and Creating the Technologies of Tomorrow,” by Ramesh Srinivasan. Reprinted with permission from the MIT Press. Copyright 2019.